The key to unlocking predictive maintenance isn’t just buying sensors; it’s making a series of strategic trade-offs that align with your specific operational goals and budget.

- Successful implementation balances sensor types (vibration vs. acoustic), data processing locations (edge vs. cloud), and connectivity protocols (wired vs. wireless).

- Calculating ROI from the start is non-negotiable and frames every technical decision, with pilot projects often breaking even after a single prevented failure.

- Data integrity is paramount; uncalibrated sensors and insecure endpoints can undermine the entire system, turning a strategic investment into a liability.

Recommendation: Begin by identifying your 3-5 most critical assets, define their most common failure modes, and then select the sensor and connectivity technology best suited to predict those specific failures—not the other way around.

On the factory floor, unplanned downtime isn’t just an inconvenience; it’s the primary enemy of productivity and profitability. For years, the standard response has been reactive—fix it when it breaks—or at best, preventive, replacing parts on a fixed schedule whether they need it or not. Many plant managers hear the buzzwords “Industrial IoT” and “Predictive Maintenance” and are told the solution is simply to install more sensors and collect more data. This often leads to pilot projects that drown in data but produce few actionable insights.

But what if the real key wasn’t just collecting data, but making intelligent, strategic trade-offs at every step of implementation? The path to a successful predictive maintenance (PdM) program lies not in a one-size-fits-all solution, but in a series of deliberate choices tailored to your specific environment, assets, and budget. It’s about understanding that the “best” sensor is the one that detects a specific failure mode, the “best” network is the one that fits your factory’s electromagnetic environment, and the “best” architecture is the one that delivers actionable alerts before a catastrophic failure occurs.

This guide is a field manual for plant managers, not a theoretical treatise. It cuts through the noise to focus on the practical decisions you’ll face. We will explore the critical trade-offs in sensor selection, data processing, and network architecture. We’ll provide a clear framework for calculating your return on investment and delve into the often-overlooked but critical issues of sensor accuracy and system security. By the end, you will have a clear roadmap for transforming your maintenance strategy from a cost center into a competitive advantage.

To navigate these critical decisions, this article is structured to guide you through each stage of planning and implementation. The following sections break down the key technical and financial considerations for building a robust and profitable predictive maintenance ecosystem.

Summary: Industrial IoT Sensors: How to Implement Predictive Maintenance in Manufacturing?

- Vibration vs Acoustic Sensors: Which Predicts Motor Failure Better?

- How to Shield Sensor Data Cables in High-Voltage Environments?

- Edge Computing: Processing Sensor Data Locally to Reduce Latency

- Reactive vs Predictive: Calculating the ROI of IoT Implementation

- Sensor Drift: Why Uncalibrated Sensors Lead to Production Errors?

- FPGA vs ASIC: Which Hardware Accelerates Crypto Mining Better?

- Wi-Fi vs LoRaWAN: Which Protocol Fits Remote Sensor Networks?

- Connected IoT Ecosystems: How to Secure Thousands of Endpoints Effectively?

Vibration vs Acoustic Sensors: Which Predicts Motor Failure Better?

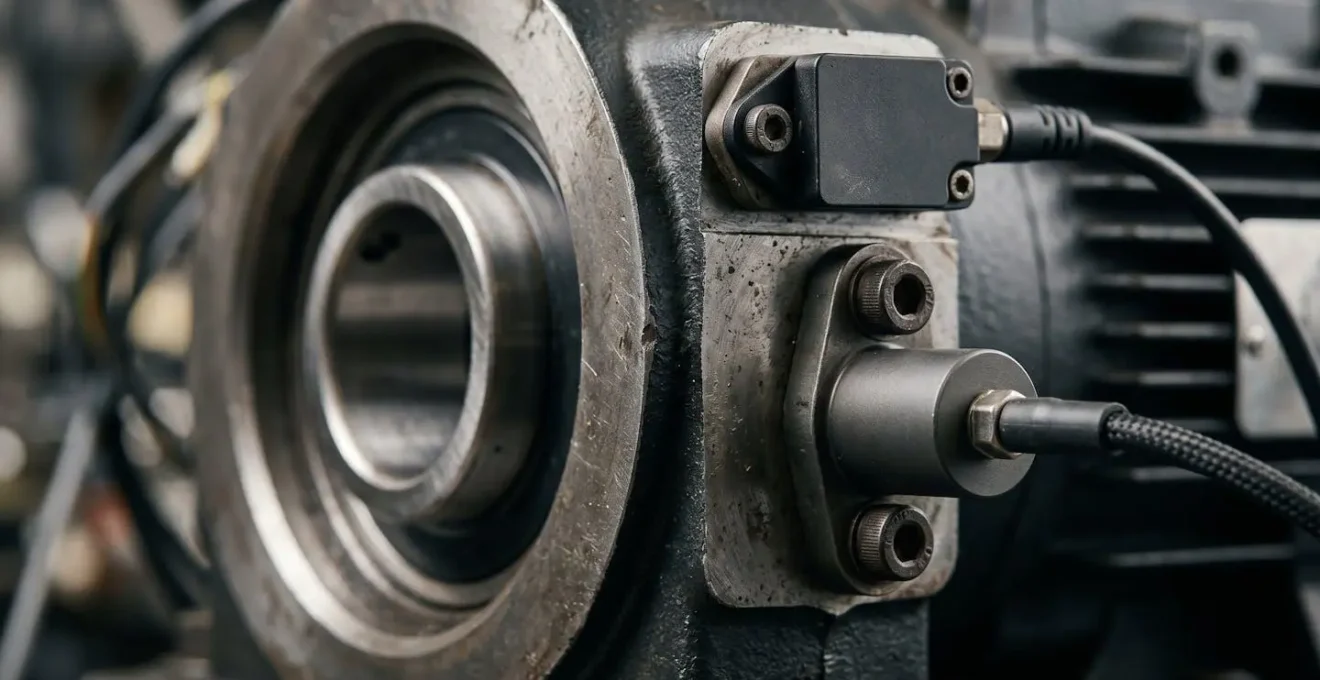

The first decision in any motor monitoring program is often choosing between vibration and acoustic sensors. Vibration sensors (accelerometers) are the industry standard, excellent at detecting physical imbalances, bearing wear, and misalignment by measuring changes in acceleration. They provide a direct, physical indication of a machine’s health. Acoustic sensors, on the other hand, listen for high-frequency ultrasonic waves generated by friction, turbulence, or electrical arcing—often the very first signs of a problem, appearing long before any detectable vibration.

The choice is a classic trade-off. Vibration analysis is well-understood and effective for late-stage failure detection. Acoustic analysis can provide earlier warnings but may be more susceptible to background noise. However, the most advanced strategies don’t treat this as an “either/or” choice. Modern research demonstrates that better predictive performance is achieved by fusing data from multiple sensors. Combining the early warnings from acoustic emissions with the physical confirmation from vibration sensors creates a much more reliable and comprehensive picture of asset health.

As this dual-sensor configuration illustrates, the goal is to capture a richer dataset. This approach builds operational resilience; if one sensor stream is compromised or fails, the other can still provide valuable data. A study on drive train monitoring confirmed this, showing that a data fusion approach significantly improved the accuracy of damage classification and enabled defect detection even when one sensor was offline. For critical assets, the incremental cost of a second sensor is minimal compared to the cost of a missed failure.
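The fallback behavior described above can be sketched as a simple decision-level fusion. This is a minimal illustration, not the method from the drive-train study. It assumes each sensor stream has already been reduced to a normalized anomaly score between 0 (healthy) and 1 (severe). The weights, the alert threshold, and the function names are hypothetical.

```python
from typing import Optional

# Hypothetical weights: acoustic emission tends to flag problems earlier,
# vibration confirms them physically. Tune these on your own failure history.
ACOUSTIC_WEIGHT = 0.4
VIBRATION_WEIGHT = 0.6
ALERT_THRESHOLD = 0.7  # hypothetical alert level on the fused 0-1 score


def fused_health_score(acoustic: Optional[float], vibration: Optional[float]) -> Optional[float]:
    """Combine per-sensor anomaly scores (0 = healthy, 1 = severe).

    None means that sensor stream is offline; the surviving stream is used
    on its own so monitoring continues.
    """
    available = []
    if acoustic is not None:
        available.append((ACOUSTIC_WEIGHT, acoustic))
    if vibration is not None:
        available.append((VIBRATION_WEIGHT, vibration))
    if not available:
        return None  # no data at all: report a sensor fault, never "healthy"
    total_weight = sum(w for w, _ in available)
    # Re-normalize so a single surviving sensor still yields a 0-1 score.
    return sum(w * s for w, s in available) / total_weight


def should_alert(acoustic: Optional[float], vibration: Optional[float]) -> bool:
    score = fused_health_score(acoustic, vibration)
    return score is not None and score >= ALERT_THRESHOLD
```

A weighted average is the simplest form of fusion; published work often fuses at the feature level with a trained classifier instead, but the re-normalization step shows how one stream can keep the alerting logic alive when the other drops out.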

How to Shield Sensor Data Cables in High-Voltage Environments?

A common headache on the factory floor is protecting sensitive sensor signals from electromagnetic interference (EMI), especially in environments with high-voltage equipment, VFDs, and welding operations. The traditional solution involves meticulous planning of shielded cables, proper grounding, and physical separation from power lines. While effective, this can be costly, complex, and inflexible, especially when retrofitting an existing plant or deploying sensors in hard-to-reach locations.

Here, a strategic pivot is to question the premise: why use cables at all? An Industry 4.0 consultant would ask you to consider the trade-offs of going wireless. Many industrial wireless protocols are designed to tolerate EMI, and they avoid most of the cost and complexity of routing, shielding, and grounding physical cable. The decision then shifts from “how to shield” to “which wireless protocol is right for this application?” Each protocol offers a different balance of range, bandwidth, and power consumption.

The following table outlines the trade-offs between major wireless protocols for industrial sensor networks, helping you select the right tool for the job.

| Protocol | Range | Bandwidth | Power Consumption | EMI Resilience | Use Case |
|---|---|---|---|---|---|
| LoRaWAN | 1-15 km | Low (0.3-50 kbps) | Very Low | High | Widespread condition sensors, remote assets |
| WirelessHART | 10-250 m | Medium (250 kbps) | Low | High | Process control, mesh networks |
| Wi-Fi 6 | 50-100 m | High (600+ Mbps) | High | Medium | Video analytics, high-data applications |
| 5G/NB-IoT | 1-10 km | Medium (100+ kbps) | Medium | Very High | Mobile assets, remote sites, failover |

For widespread, low-data-rate condition monitoring (like temperature or pressure on non-critical assets), a low-power, long-range protocol like LoRaWAN is ideal. For high-data applications like video-based quality control, Wi-Fi 6 is a better fit. By reframing the problem, you can turn a cabling nightmare into a strategic advantage and deploy sensors faster and more flexibly.
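One way to make the table actionable is to encode it as data and filter by requirements. The sketch below is a hypothetical helper, not a standard tool: it uses the upper bounds from the table above (treating “100+ kbps” conservatively as 100 kbps) and picks the lowest-power protocol that satisfies both the range and bandwidth requirements. A real selection would also weigh EMI conditions, licensing, coverage, and cost.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Protocol:
    name: str
    max_range_km: float
    max_bandwidth_kbps: float
    power_rank: int  # 0 = very low ... 3 = high, from the table's power column


# Upper bounds taken from the comparison table; "100+ kbps" is treated as 100.
PROTOCOLS = [
    Protocol("LoRaWAN", 15.0, 50.0, 0),
    Protocol("WirelessHART", 0.25, 250.0, 1),
    Protocol("5G/NB-IoT", 10.0, 100.0, 2),
    Protocol("Wi-Fi 6", 0.1, 600_000.0, 3),
]


def pick_protocol(range_km: float, bandwidth_kbps: float) -> Optional[str]:
    """Return the lowest-power protocol meeting both requirements, or None."""
    candidates = [
        p for p in PROTOCOLS
        if p.max_range_km >= range_km and p.max_bandwidth_kbps >= bandwidth_kbps
    ]
    if not candidates:
        return None  # no single protocol fits: consider wired or a hybrid design
    return min(candidates, key=lambda p: p.power_rank).name
```

For example, a temperature sensor 5 km away that needs about 1 kbps maps to LoRaWAN, while a camera 50 m from an access point that needs 10 Mbps maps to Wi-Fi 6.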

Edge Computing: Processing Sensor Data Locally to Reduce Latency

Once your sensors are collecting data, the next critical decision is where to process it. Sending every raw data point from thousands of sensors to a centralized cloud server can create significant challenges with bandwidth costs, data storage, and, most importantly, latency. For predictive maintenance, a delay of even a few seconds between a critical event and an alert can be the difference between a minor adjustment and a catastrophic failure. This is where edge computing becomes a game-changer.

Edge computing involves processing data on or near the device where it is generated, rather than in a distant cloud. An edge gateway on the factory floor can analyze data from local sensors in real time, running machine learning models to detect anomalies instantly. Only relevant results, alerts, or summaries are then sent to the cloud for long-term storage and analysis. This approach dramatically reduces latency, lowers network traffic, and ensures that critical operations can continue even if the connection to the cloud is temporarily lost. The impact on the bottom line can be direct: smart factories using edge computing have reported up to a 50% reduction in unplanned downtime.
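The “analyze locally, send only results” pattern can be sketched in a few lines. This is a simplified stand-in for the machine learning models mentioned above: it assumes the gateway receives windows of raw accelerometer samples, computes their root-mean-square (RMS) level, and compares it with a known healthy baseline. The function names and the 1.5× alert ratio are hypothetical.

```python
import math
from typing import Optional, Sequence


def window_rms(samples: Sequence[float]) -> float:
    """Root-mean-square of one window of accelerometer samples."""
    if not samples:
        raise ValueError("empty window")
    return math.sqrt(sum(x * x for x in samples) / len(samples))


def process_window_at_edge(
    samples: Sequence[float],
    baseline_rms: float,
    alert_ratio: float = 1.5,  # hypothetical: alert when RMS is 50% above baseline
) -> Optional[dict]:
    """Analyze raw samples on the gateway; return only a compact cloud message.

    Returns None for an unremarkable window, so nothing is transmitted and
    the raw samples never leave the factory floor.
    """
    rms = window_rms(samples)
    if rms >= alert_ratio * baseline_rms:
        return {
            "type": "alert",
            "rms": round(rms, 3),
            "baseline": baseline_rms,
            "samples_analyzed": len(samples),
        }
    return None
```

A window of thousands of samples collapses into either nothing or a four-field alert, which is where the bandwidth and latency savings come from. A production gateway would typically also batch periodic summaries for long-term trending in the cloud.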

Case Study: Siemens Industrial Edge in Automotive Manufacturing

A leading automotive supplier implemented Siemens Industrial Edge across multiple facilities to bring data analysis closer to the production line. By processing data locally, they could monitor and adjust processes in real-time. The results were significant: an 18% reduction in scrap and waste, a 12% boost in Overall Equipment Effectiveness (OEE), and a complete return on investment within just 9 months, driven by increased productivity and cost savings from localized, low-latency data analysis.

The trade-off here is between the simplicity of a pure cloud architecture and the resilience and speed of an edge or hybrid model. For any application where real-time response is critical, such as high-speed assembly lines or safety-critical systems, the investment in edge processing capability can pay for itself by preventing a single major outage.

Reactive vs Predictive: Calculating the ROI of IoT Implementation

For any plant manager, the most important question is: “What’s the return on this investment?” Moving from a reactive (“run-to-failure”) or preventive (schedule-based) maintenance model to a predictive one requires upfront investment in sensors, software, and training. The justification lies in a clear ROI calculation that frames PdM not as a cost, but as a high-yield investment. The published figures are strong: the U.S. Department of Energy documents a 10:1 ROI on predictive maintenance, with a 70-75% reduction in equipment breakdowns and 25-30% lower maintenance costs.

The core of the ROI calculation is simple: compare the total cost of your current maintenance strategy (downtime hours, lost production, expedited parts, labor overtime) with the projected savings and costs of a PdM program. The savings come from:

- Reduced Unplanned Downtime: The largest single contributor to ROI.

- Optimized MRO Inventory: Order parts just-in-time instead of holding expensive safety stock.

- Increased Labor Efficiency: Technicians work on actual problems, not scheduled tasks or false alarms.

- Extended Asset Life: Proactive maintenance extends the useful life of critical equipment.

A phased implementation allows you to demonstrate value quickly. Start with a small pilot on 5-10 of your most critical (or most problematic) assets. Preventing just one major failure is often enough to cover the cost of running the entire pilot for several years. Once you prove the ROI on a small scale, securing the budget to expand the program across the facility becomes a much simpler conversation.
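The comparison described above can be turned into a first-pass pilot calculator. This is a minimal sketch with hypothetical field names. It computes ROI as net first-year benefit divided by first-year cost, which may differ from how the DOE figure is derived. It also counts how many prevented major failures would cover the pilot’s cost. Extended asset life is left out because it is harder to estimate up front.

```python
import math
from dataclasses import dataclass


@dataclass
class PilotEstimate:
    # All figures are hypothetical inputs to replace with your own plant data.
    program_cost: float            # sensors, software, integration, training (year 1)
    annual_operating_cost: float   # subscriptions, calibration, support
    downtime_hours_avoided: float  # per year
    cost_per_downtime_hour: float  # lost production + overtime + expedited parts
    maintenance_savings: float     # per year: leaner MRO inventory, labor efficiency


def first_year_cost(e: PilotEstimate) -> float:
    return e.program_cost + e.annual_operating_cost


def first_year_roi(e: PilotEstimate) -> float:
    """Net first-year benefit divided by first-year cost (0.5 means a 50% return)."""
    savings = e.downtime_hours_avoided * e.cost_per_downtime_hour + e.maintenance_savings
    cost = first_year_cost(e)
    return (savings - cost) / cost


def failures_to_break_even(e: PilotEstimate, cost_per_failure: float) -> int:
    """Number of prevented major failures needed to cover the first-year cost."""
    return math.ceil(first_year_cost(e) / cost_per_failure)
```

With illustrative numbers, a $60,000 pilot plus $10,000 in running costs that avoids 40 hours of downtime at $5,000 per hour and saves $20,000 in maintenance returns roughly 2.14 (214%) in the first year. If a single major failure costs $120,000, one prevented failure covers the whole pilot.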

Sensor Drift: Why Uncalibrated Sensors Lead to Production Errors?

A predictive maintenance system is only as reliable as the data it receives. A common but dangerous assumption is that once a sensor is installed, it will remain accurate forever. In reality, all sensors are subject to “drift”—a gradual, often imperceptible deviation from their calibrated measurement over time. This can be caused by aging, temperature fluctuations, or harsh operating conditions. When a sensor drifts, it sends back faulty data, leading your AI models to see “ghost” anomalies or, even worse, miss the signs of a genuine impending failure.

The consequences are costly and erode trust in the entire system. Maintenance teams are dispatched to chase problems that don’t exist, wasting valuable time and resources. In fact, industry studies reveal that up to 63% of instrument-related maintenance calls find no problems with the suspected equipment, with a staggering 75% of control valves pulled for maintenance not actually needing it. This is often a direct result of relying on uncalibrated, drifting sensor data.

In chemical and natural gas processing, every hour of downtime incurs a six-figure price tag, and each inaccurate sensor reading poses a risk, both of which can be avoided.

– Siege Engineering, Sensor Drift vs. Bad Equipment: A Diagnostic Playbook for Ops and Maintenance

The solution is not to abandon sensors, but to build a robust calibration and validation strategy into your maintenance plan from day one. This includes periodic checks against a known “golden” standard, using redundant sensors to cross-validate readings, and implementing software algorithms that can detect and flag potential sensor drift. Treating your sensors as critical assets that require their own maintenance schedule is the only way to ensure the long-term integrity and reliability of your predictive maintenance program.
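The redundant-sensor cross-check mentioned above can be sketched as a sliding-window comparison. This is one simple drift heuristic, not a standard algorithm from the quoted playbook. Single readings from two sensors disagree because of noise, so the check averages the difference over a window. A sustained offset beyond a tolerance suggests that one sensor has drifted. The class name, window size, and tolerance are hypothetical.

```python
from collections import deque
from statistics import fmean


class DriftMonitor:
    """Flag possible drift when a sensor persistently disagrees with a redundant one.

    Uses the mean primary-minus-reference difference over a sliding window,
    so random noise averages out while a steady offset does not.
    """

    def __init__(self, tolerance: float, window: int = 50):
        self.tolerance = tolerance         # hypothetical: max acceptable mean offset
        self.diffs = deque(maxlen=window)  # most recent paired differences

    def update(self, primary: float, reference: float) -> bool:
        """Record one paired reading; return True if drift is suspected."""
        self.diffs.append(primary - reference)
        if len(self.diffs) < self.diffs.maxlen:
            return False  # not enough history yet to judge
        return abs(fmean(self.diffs)) > self.tolerance
```

A flag from this monitor is best treated as a trigger for a calibration check against the “golden” standard rather than as proof of which sensor is wrong. Two sensors alone cannot tell you which one drifted; a third sensor or a reference instrument can.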

FPGA vs ASIC: Which Hardware Accelerates Crypto Mining Better?

While the headline-grabbing application for FPGAs and ASICs has been cryptocurrency mining, their real value in Industry 4.0 lies in their ability to accelerate AI workloads at the edge. As your predictive maintenance program matures, you may find that the CPU in your edge gateway is insufficient for running complex machine learning models in real time. This is where specialized hardware becomes the next strategic trade-off.

An FPGA (Field-Programmable Gate Array) is a highly flexible chip that can be reprogrammed after manufacturing. This makes it ideal for the pilot and early adoption phases of a PdM project, where your AI models are constantly evolving and improving. You can update the hardware’s logic to match your new algorithms without replacing the physical chip.

An ASIC (Application-Specific Integrated Circuit), by contrast, is custom-designed for one specific task. It offers the highest possible performance and energy efficiency but is completely inflexible. Development is slow and expensive, making it suitable only for mature, large-scale deployments where the AI model is stable and will be rolled out across thousands of identical units. The choice between them is a classic trade-off between flexibility and performance-at-scale.

This table compares the two technologies specifically for their use in accelerating edge AI within a predictive maintenance context.

| Characteristic | FPGA (Field-Programmable Gate Array) | ASIC (Application-Specific Integrated Circuit) |
|---|---|---|
| Flexibility | High – Reprogrammable for evolving AI models | Low – Fixed hardware design |
| Development Time | Faster (weeks to months) | Slower (6-18 months) |
| Unit Cost | Higher per unit | Lower at volume (10,000+ units) |
| Energy Efficiency (inferences per watt) | Moderate | Highest (optimized for a specific algorithm) |
| Use Case – PdM | Ideal for pilot phase with evolving models | Best for mature, enterprise-wide deployment |
| Scalability | Good for prototypes and small-scale | Excellent for mass production |
| Thermal Management | Moderate heat generation | Optimized heat dissipation for specific workload |
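The “Unit Cost” row reflects a simple break-even relationship. Each option has a one-time engineering cost (NRE) plus a per-unit cost, so total cost is $C(N) = \text{NRE} + c \cdot N$. The ASIC becomes cheaper once $N \ge (\text{NRE}_{\text{ASIC}} - \text{NRE}_{\text{FPGA}}) / (c_{\text{FPGA}} - c_{\text{ASIC}})$. The sketch below computes that crossover. All dollar figures in the example are hypothetical, and the sketch ignores redesign costs: every model change on an ASIC can mean another round of NRE.

```python
import math


def total_cost(nre: float, unit_cost: float, units: int) -> float:
    """One-time engineering (NRE) cost plus per-unit cost for a deployment size."""
    return nre + unit_cost * units


def asic_crossover_units(fpga_nre: float, fpga_unit: float,
                         asic_nre: float, asic_unit: float) -> int:
    """Smallest deployment size at which the ASIC is no more expensive overall."""
    if asic_unit >= fpga_unit:
        raise ValueError("the ASIC never catches up unless its unit cost is lower")
    return max(0, math.ceil((asic_nre - fpga_nre) / (fpga_unit - asic_unit)))
```

With a hypothetical $50,000 FPGA NRE at $200 per unit versus a $2,000,000 ASIC NRE at $20 per unit, the crossover lands at 10,834 units, in line with the table’s “10,000+ units” guidance.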

Wi-Fi vs LoRaWAN: Which Protocol Fits Remote Sensor Networks?

While we’ve discussed wireless as an alternative to shielded cables, the choice of protocol becomes even more critical when dealing with remote or widely dispersed assets. A sensor network spanning a large production facility, an outdoor tank farm, or even a fleet of vehicles has vastly different requirements than a dense cluster of sensors on a single machine. The main trade-off is between high bandwidth (Wi-Fi) and long range/low power (LoRaWAN).

Wi-Fi (especially Wi-Fi 6) is perfect for data-intensive applications over shorter distances. Think real-time video streaming for quality inspection or downloading large diagnostic files from a complex piece of machinery. Its downside is higher power consumption and a more limited range, often requiring multiple access points to cover a large area.

LoRaWAN, on the other hand, is designed for the exact opposite scenario. It sends tiny packets of data (e.g., a single temperature or pressure reading) over very long distances (kilometers) using minimal power. A sensor’s battery can last for years, making it perfect for “set-and-forget” deployments in remote or hard-to-access locations. The compromise is its very low bandwidth; it’s completely unsuitable for streaming or large data transfers.
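To make “tiny packets” concrete, here is a hypothetical compact payload layout using Python’s standard `struct` module. It fits a temperature, a pressure, and a battery level into 5 bytes by sending scaled integers instead of text. The field layout and scaling factors are assumptions for illustration. The maximum payload actually allowed depends on the LoRaWAN regional parameters and the data rate in use.

```python
import struct
from typing import Tuple

# Hypothetical 5-byte payload layout (big-endian):
#   int16  temperature in hundredths of a degree C (-327.68 .. 327.67)
#   uint16 pressure in tenths of a kPa (0 .. 6553.5)
#   uint8  battery level in percent
PAYLOAD_FORMAT = ">hHB"


def encode_reading(temp_c: float, pressure_kpa: float, battery_pct: int) -> bytes:
    """Scale each value to an integer so it fits a fixed-size binary field."""
    return struct.pack(PAYLOAD_FORMAT, round(temp_c * 100), round(pressure_kpa * 10), battery_pct)


def decode_reading(payload: bytes) -> Tuple[float, float, int]:
    """Reverse the scaling on the network-server or application side."""
    temp, pressure, battery = struct.unpack(PAYLOAD_FORMAT, payload)
    return temp / 100, pressure / 10, battery
```

The same reading sent as a JSON string would take several times as many bytes. On a link measured in hundreds of bits per second, that difference shows up directly in airtime and battery life.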

Case Study: Hybrid Network Architecture in a Smart Factory

The most sophisticated smart factories don’t choose one protocol; they build a hybrid network that leverages the strengths of each. A typical advanced deployment uses a combination of technologies for optimal performance. High-bandwidth Wi-Fi 6 is used for video analytics on the production line. Low-power LoRaWAN is deployed for thousands of condition monitoring sensors spread across the entire campus. Finally, mission-critical machine controls that require guaranteed, rock-solid reliability and latency still rely on wired Ethernet. This tiered approach ensures that every application has the right connectivity for its specific needs without compromise.

Key Takeaways

- Predictive Maintenance is a series of strategic trade-offs, not a single technology purchase. Every choice—from sensor type to security protocol—must be weighed against cost, benefit, and operational reality.

- ROI is the ultimate metric. A successful PdM program is framed as a profit-generating investment, with pilot projects designed to prove value quickly by preventing a single, costly failure.

- Data integrity and security are non-negotiable. An entire PdM system built on inaccurate data from drifting sensors or vulnerable to cyberattacks is worse than having no system at all.

Connected IoT Ecosystems: How to Secure Thousands of Endpoints Effectively?

As you deploy hundreds or thousands of sensors across your facility, you are also creating an equal number of new potential entry points for cyberattacks. Each connected sensor, gateway, and actuator is an “endpoint” that must be secured. The threat is not theoretical; according to Check Point research, 54% of companies experience attempted cyberattacks on IoT devices every week, with manufacturing being a prime target. The financial risk is enormous, as IBM’s 2024 report revealed the average cost of a data breach in the manufacturing sector exceeds $5.5 million.

Securing an IIoT ecosystem goes beyond standard IT security. These are often low-power devices that can’t run complex antivirus software, deployed in physically accessible locations. Effective security relies on a “Zero Trust” principle—never trust, always verify—and managing the entire lifecycle of the device, from initial deployment to secure retirement. This involves network segmentation to isolate your operational technology (OT) from your IT network, end-to-end encryption of all data, and a secure way to push over-the-air (OTA) firmware updates to patch vulnerabilities.

Action Plan: Secure Device Lifecycle Management for Industrial IoT

- Secure Provisioning: Implement device authentication before any network connection is allowed. Use unique cryptographic identities for each sensor and verify device genuineness through a hardware root of trust, establishing a secure onboarding protocol with certificate-based authentication.

- Secure Operation: Deploy end-to-end encryption for all sensor data in transit and at rest. Implement secure over-the-air (OTA) update mechanisms with digitally signed firmware to prevent malicious code injection, and use network segmentation to isolate critical OT devices from the general IT infrastructure.

- Secure Decommissioning: Immediately revoke device credentials and network access upon retirement. Securely wipe all sensitive data and configurations from device memory and maintain a clear audit trail of all decommissioned devices to prevent orphaned endpoints from becoming forgotten security vulnerabilities.
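The provisioning, authentication, and decommissioning steps above can be sketched as a small lifecycle registry with an audit trail. This is a deliberately simplified, hypothetical illustration. Instead of certificate-based identities backed by a hardware root of trust, each device receives a random per-device secret and proves its identity with an HMAC-SHA256 over a single-use server nonce. The sketch shows the lifecycle logic (unique identity, deny by default, immediate revocation, audit log), not a production authentication scheme.

```python
import hashlib
import hmac
import secrets
from datetime import datetime, timezone


class DeviceRegistry:
    """Toy provision -> operate -> decommission registry with an audit trail.

    Assumption: per-device shared secrets plus HMAC challenge-response stand in
    for the certificate-based identities recommended in the action plan.
    """

    def __init__(self):
        self._keys = {}      # device_id -> secret, present only while the device is active
        self._pending = {}   # device_id -> outstanding challenge nonce
        self.audit_log = []  # (UTC timestamp, event, device_id)

    def _log(self, event, device_id):
        self.audit_log.append((datetime.now(timezone.utc).isoformat(), event, device_id))

    def provision(self, device_id):
        """Create a unique identity before the device is allowed on the network."""
        if device_id in self._keys:
            raise ValueError(f"{device_id} is already provisioned")
        key = secrets.token_bytes(32)
        self._keys[device_id] = key
        self._log("provisioned", device_id)
        return key  # in practice, injected into the device during secure onboarding

    def new_challenge(self, device_id):
        nonce = secrets.token_bytes(16)
        self._pending[device_id] = nonce
        return nonce

    def authenticate(self, device_id, tag):
        """Verify the device's HMAC over its outstanding nonce (deny by default)."""
        key = self._keys.get(device_id)
        nonce = self._pending.pop(device_id, None)  # each challenge can be used once
        if key is None or nonce is None:
            self._log("auth_denied", device_id)
            return False
        expected = hmac.new(key, nonce, hashlib.sha256).digest()
        ok = hmac.compare_digest(expected, tag)  # constant-time comparison
        self._log("auth_ok" if ok else "auth_failed", device_id)
        return ok

    def decommission(self, device_id):
        """Revoke credentials immediately and record the retirement."""
        self._keys.pop(device_id, None)
        self._pending.pop(device_id, None)
        self._log("decommissioned", device_id)
```

On the device side, the response is `hmac.new(key, nonce, hashlib.sha256).digest()`. Because each nonce is consumed on first use, a captured response cannot be replayed. Once a device is decommissioned, every later attempt is denied and logged, so no orphaned endpoint can quietly reconnect.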

Security cannot be an afterthought. It must be designed into your IIoT architecture from the very first day. Building a secure foundation is the only way to ensure that your predictive maintenance program remains a valuable asset and does not become your biggest liability.

Now that you have the strategic framework, the next logical step is to begin mapping your critical assets to their specific failure modes. Start today by building the business case for a pilot project that will demonstrate clear, quantifiable ROI and transform your approach to maintenance.