Cloud Software

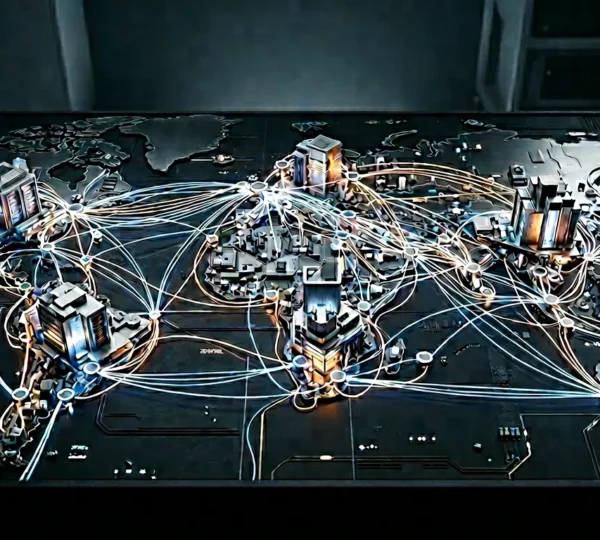

Imagine running a business where your IT infrastructure automatically expands during peak demand and shrinks when traffic drops—all without purchasing a single physical server. This is the transformative promise of cloud software, a paradigm shift that has fundamentally changed how organizations deploy, manage, and pay for computing resources.

Whether you’re a startup founder exploring your first deployment or an IT manager evaluating migration strategies, understanding cloud software is no longer optional. From auto-scaling that absorbs unexpected traffic surges to multi-cloud architectures that support business continuity, the cloud ecosystem spans technologies, strategies, and financial models that directly affect operational efficiency and bottom-line results.

This resource covers the core pillars of cloud software: scalability mechanisms, multi-cloud resilience, virtualization technologies, network integration challenges, public cloud platforms, and the shift from capital expenditures to operational spending. Each section gives foundational knowledge and points to the deeper technical considerations that modern organizations must master.

Why Does Scalability Define Cloud Software Success?

Think of scalability as your infrastructure’s ability to breathe. Just as lungs expand and contract based on oxygen demand, scalable cloud infrastructure adjusts computing resources in real-time based on workload requirements. This elasticity represents perhaps the most significant departure from traditional IT paradigms.

The Cost of Static Capacity

Organizations that cling to fixed server capacity often find they are wasting a substantial portion of their IT budget. Static infrastructure is commonly reported to run at only 30-40% average utilization, which means companies pay for resources that sit idle most of the time. It’s like renting an entire stadium for a weekly meeting of ten people.
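To make the utilization figure concrete, here is a minimal sketch of the arithmetic. The function name `idle_spend` and the $10,000 monthly bill are illustrative assumptions, not figures from any real invoice.

```python
def idle_spend(monthly_cost: float, avg_utilization: float) -> float:
    """Portion of a fixed monthly bill that pays for unused capacity."""
    if not 0.0 <= avg_utilization <= 1.0:
        raise ValueError("avg_utilization must be between 0 and 1")
    return monthly_cost * (1.0 - avg_utilization)


# Hypothetical fixed infrastructure bill of $10,000 per month.
for utilization in (0.30, 0.40):
    wasted = idle_spend(10_000.0, utilization)
    print(f"{utilization:.0%} utilization: ${wasted:,.0f} of capacity idle per month")
```

At these utilization levels, well over half of the fixed bill pays for capacity that is not doing work.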

Cloud software eliminates this inefficiency by enabling precise resource allocation. When traffic spikes—whether from a viral marketing campaign, seasonal demand, or unexpected media coverage—auto-scaling rules automatically provision additional compute power.

Vertical vs. Horizontal Scaling

Two fundamental approaches exist for expanding capacity:

- Vertical scaling (scaling up): Adding more power to existing machines—more RAM, faster CPUs, larger storage. Simple but limited by hardware ceilings.

- Horizontal scaling (scaling out): Adding more machines to distribute workload. More complex but virtually unlimited in capacity.

Database workloads particularly benefit from understanding this distinction. Relational databases often scale vertically more gracefully, while distributed NoSQL systems are architected for horizontal expansion from the ground up.

Critical Scaling Metrics

Knowing when to scale requires monitoring the right signals. Three metrics typically indicate imminent overload: CPU utilization that stays near its threshold, memory consumption that keeps climbing, and growing request queue depth. Auto-scaling rules that trigger on momentary spikes rather than sustained load add unnecessary cost and destabilize the system, because instances are launched and terminated in quick succession.
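The difference between a momentary spike and sustained load can be sketched as a small rule that fires only when every sample in a recent window is above the threshold. The class name `SustainedLoadTrigger`, the 80% threshold, and the three-sample window are assumptions chosen for illustration; real auto-scalers expose their own configuration for this.

```python
from collections import deque


class SustainedLoadTrigger:
    """Signals a scale-out only when load stays high for a full window."""

    def __init__(self, threshold: float, window: int):
        self.threshold = threshold
        # Keeps only the most recent `window` samples.
        self.samples = deque(maxlen=window)

    def observe(self, cpu_percent: float) -> bool:
        """Record one sample and report whether scaling is warranted."""
        self.samples.append(cpu_percent)
        window_full = len(self.samples) == self.samples.maxlen
        return window_full and all(s >= self.threshold for s in self.samples)


trigger = SustainedLoadTrigger(threshold=80.0, window=3)
decisions = [trigger.observe(cpu) for cpu in (95.0, 40.0, 85.0, 90.0, 88.0)]
print(decisions)
```

The single 95% reading at the start does not trigger scaling; only the final run of three consecutive readings above 80% does.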

How Do Multi-Cloud Strategies Enhance Resilience?

Relying on a single cloud provider is like keeping all your savings in one bank. If that institution runs into problems, your entire financial life freezes. Multi-cloud architectures distribute workloads across providers such as AWS, Azure, and Google Cloud, creating redundancy that can keep a business running during a regional outage.

Multi-Cloud vs. Hybrid Cloud

These terms often cause confusion. Multi-cloud refers to using multiple public cloud providers simultaneously. Hybrid cloud combines public cloud services with private data centers or on-premises infrastructure. Both strategies offer redundancy benefits, but they address different organizational needs:

- Multi-cloud excels at avoiding vendor lock-in and leveraging provider-specific strengths

- Hybrid cloud suits organizations with regulatory requirements or legacy systems that cannot move to public infrastructure

- Many enterprises ultimately adopt hybrid multi-cloud approaches combining both strategies

The Data Synchronization Challenge

Multi-cloud architectures introduce complexity, particularly around data consistency. Synchronization mistakes between cloud databases can corrupt critical information, creating scenarios where different systems hold conflicting versions of truth. Proper implementation requires careful consideration of consistency models, replication lag tolerances, and conflict resolution mechanisms.
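One simple conflict resolution mechanism is last-write-wins, sketched below for two replicas of the same key-value data. The `Record` type and `merge_last_write_wins` function are hypothetical names for illustration. This is only one of several strategies: it relies on reasonably synchronized clocks and silently discards the older of two concurrent updates, so the sketch also returns the conflicting keys so they can be reviewed.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Record:
    value: str
    updated_at: float  # seconds since epoch, as written by the source replica


def merge_last_write_wins(
    a: dict[str, Record], b: dict[str, Record]
) -> tuple[dict[str, Record], list[str]]:
    """Merge two replicas; on conflicting values keep the newer record."""
    merged = dict(a)
    conflicts = []
    for key, theirs in b.items():
        ours = merged.get(key)
        if ours is None:
            merged[key] = theirs          # key exists only in replica b
        elif ours.value != theirs.value:
            conflicts.append(key)         # replicas disagree on this key
            if theirs.updated_at > ours.updated_at:
                merged[key] = theirs
    return merged, conflicts


replica_a = {"order-1": Record("paid", 100.0), "order-2": Record("new", 50.0)}
replica_b = {"order-1": Record("refunded", 130.0), "order-3": Record("new", 70.0)}
merged, conflicts = merge_last_write_wins(replica_a, replica_b)
print(merged["order-1"].value, conflicts)
```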

Establishing secure connections between providers—such as VPN tunnels between AWS and Azure—demands meticulous configuration. Even small firewall misconfigurations can inadvertently expose internal networks or break essential connectivity.

What Role Does Virtualization Play in Cloud Environments?

Virtualization serves as the foundational technology enabling cloud computing. By creating software-based representations of physical hardware, hypervisors allow multiple virtual machines to share underlying resources efficiently. Because of this abstraction layer, most enterprise applications no longer need direct access to the hardware they run on.

Hypervisor Selection Considerations

The choice between hypervisor platforms—VMware’s commercial solutions versus open-source options like KVM—impacts scalability, licensing costs, and operational complexity. VMware offers polished management tools and extensive enterprise support. KVM provides flexibility and eliminates licensing fees but requires deeper technical expertise.

Both platforms must address the noisy neighbor problem: situations where one virtual machine’s resource consumption degrades performance for other VMs sharing the same physical host. Proper resource allocation policies and monitoring prevent critical workloads from suffering.

Zero-Downtime VM Migration

Modern virtualization enables moving running virtual machines between physical hosts without service interruption, a capability commonly called live migration. It proves invaluable during hardware maintenance, capacity rebalancing, and infrastructure upgrades. Adjusting each VM’s memory allocation automatically based on real-time usage further optimizes resource distribution across virtualized environments.

Network Integration: Bridging On-Premises and Cloud

Seamless connectivity between traditional data centers and cloud environments determines whether hybrid architectures deliver promised benefits or create frustrating bottlenecks. Network integration encompasses far more than simply establishing connections—it requires optimizing those connections for performance, security, and cost.

Connectivity Options Compared

Organizations typically evaluate several approaches for connecting on-premises infrastructure to cloud platforms:

- VPN connections: Encrypted tunnels over public internet. Cost-effective but subject to internet variability.

- Dedicated connections: Private links like AWS Direct Connect providing consistent throughput. Higher cost but predictable performance.

- SD-WAN solutions: Software-defined approaches that intelligently route traffic across multiple connection types.

The choice depends on workload requirements. Moving petabytes to the cloud takes longer than most organizations anticipate, making initial connection decisions critical for migration timelines.
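A rough back-of-the-envelope calculation shows why large migrations take so long. The sketch below assumes decimal units (1 TB = 10^12 bytes) and a hypothetical `efficiency` factor of 0.8 to account for protocol overhead and contention; actual throughput depends on the link and the workload.

```python
def transfer_days(data_terabytes: float, link_gbps: float, efficiency: float = 0.8) -> float:
    """Estimate days needed to move a dataset over a link of the given speed."""
    bits = data_terabytes * 1e12 * 8              # terabytes -> bits
    effective_bps = link_gbps * 1e9 * efficiency  # usable bits per second
    return bits / effective_bps / 86_400          # seconds -> days


# One petabyte (1,000 TB) over two hypothetical link speeds.
for gbps in (1.0, 10.0):
    print(f"{gbps:g} Gbps: about {transfer_days(1000.0, gbps):.1f} days")
```

Under these assumptions a single petabyte needs months on a 1 Gbps link and more than a week even at 10 Gbps, which is why the connection choice shapes the migration timeline.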

Route Optimization Principles

Effective network design ensures local traffic stays local. When a user in one region accesses data stored nearby, that request shouldn’t traverse intercontinental links. Proper route optimization reduces latency, improves user experience, and controls egress costs that can otherwise escalate dramatically.

Public Cloud Platforms: Capabilities and Responsibilities

Public clouds such as AWS, Azure, and Google Cloud Platform offer compelling advantages for global application deployment. Replicating an infrastructure stack across geographic regions, which once required months of planning, can now happen in minutes. Specialized services for AI workloads, big data processing, and emerging technologies provide capabilities few organizations could develop independently.

The Shared Responsibility Model

A critical misunderstanding persists: many organizations believe cloud providers handle all security concerns. In reality, shared responsibility models mean customers remain accountable for securing their data, configuring access controls, and managing application-level security. The provider secures the underlying infrastructure; what is built on top falls to the customer, with the exact boundary depending on the service model in use.

Cost Optimization Strategies

Cloud billing complexity catches many organizations unprepared. Common optimization approaches include:

- Identifying and eliminating zombie resources—unused instances, orphaned storage volumes, and forgotten services that accumulate charges

- Leveraging spot instances for fault-tolerant batch processing, potentially reducing compute costs by up to 90% compared with on-demand pricing

- Implementing tagging strategies that enable accurate cost allocation across departments and projects

- Using cloud cost management tools to identify regions or availability zones where equivalent compute is cheaper
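Two of the approaches above, tag-based cost allocation and zombie detection, can be sketched over a simplified resource inventory. The record layout, the `team` tag key, and the 2% CPU floor are assumptions made for illustration; real billing exports and monitoring data have provider-specific formats.

```python
from collections import defaultdict

# Hypothetical inventory; real data would come from billing and monitoring exports.
resources = [
    {"id": "vm-web-1", "monthly_cost": 120.0, "tags": {"team": "web"}, "avg_cpu_percent": 45.0},
    {"id": "vm-web-2", "monthly_cost": 80.0, "tags": {"team": "web"}, "avg_cpu_percent": 0.5},
    {"id": "vm-etl-1", "monthly_cost": 300.0, "tags": {"team": "data"}, "avg_cpu_percent": 62.0},
    {"id": "vm-old-7", "monthly_cost": 45.0, "tags": {}, "avg_cpu_percent": 0.0},
]


def cost_by_tag(items, tag_key):
    """Sum monthly cost per tag value; untagged resources get their own bucket."""
    totals = defaultdict(float)
    for item in items:
        owner = item["tags"].get(tag_key, "untagged")
        totals[owner] += item["monthly_cost"]
    return dict(totals)


def zombie_candidates(items, cpu_floor=2.0):
    """List resource ids whose average CPU is below the floor, for manual review."""
    return [item["id"] for item in items if item["avg_cpu_percent"] < cpu_floor]


print(cost_by_tag(resources, "team"))
print(zombie_candidates(resources))
```

Low CPU alone does not prove a resource is unused, so the second function only produces candidates for a person to confirm before anything is deleted.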

Financial Transformation: From CapEx to OpEx

Cloud adoption fundamentally changes IT financial models. Traditional infrastructure required large capital expenditures—buying servers, storage, and networking equipment upfront. Cloud software shifts this spending to operational expenditures: monthly or hourly fees based on actual consumption.

Strategic Benefits of OpEx Models

This transition offers advantages beyond simple accounting categorization. Organizations gain agility, because they can experiment with new services without major capital commitments. Cash flow improves when large upfront purchases are replaced by smaller recurring charges.

However, forecasting variable cloud spend requires new competencies. Organizations achieving high forecast accuracy typically implement robust monitoring, establish spending alerts, and conduct regular optimization reviews.
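A spending alert can be as simple as projecting the current run rate to the end of the month and comparing it with the budget. The sketch below uses a naive linear projection; the function names and the 90% warning ratio are illustrative assumptions, and a real forecast would usually account for seasonality and planned changes.

```python
def projected_month_end(spend_to_date: float, day_of_month: int, days_in_month: int) -> float:
    """Linear run-rate projection of total spend for the month."""
    return spend_to_date / day_of_month * days_in_month


def budget_alert(spend_to_date: float, day_of_month: int, days_in_month: int,
                 budget: float, warn_ratio: float = 0.9) -> str:
    """Classify projected spend as 'ok', 'warning', or 'over' relative to budget."""
    projection = projected_month_end(spend_to_date, day_of_month, days_in_month)
    if projection > budget:
        return "over"
    if projection >= budget * warn_ratio:
        return "warning"
    return "ok"


# Day 10 of a 30-day month against a hypothetical $10,000 budget.
for spend in (2_000.0, 3_100.0, 4_000.0):
    print(spend, budget_alert(spend, 10, 30, 10_000.0))
```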

When CapEx Still Makes Sense

Not every scenario favors operational spending. Predictable, steady-state workloads running continuously may cost less on owned hardware over multi-year periods. Some organizations maintain hybrid financial approaches, using owned infrastructure for baseline capacity while bursting to cloud resources during peak demand.
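The trade-off for a steady-state workload can be framed as a break-even calculation. This sketch deliberately ignores financing costs, depreciation, and hardware refresh cycles, and all of the figures are hypothetical.

```python
import math


def break_even_month(hardware_cost: float, owned_monthly_cost: float,
                     cloud_monthly_cost: float):
    """First month in which owning becomes no more expensive than renting.

    Returns None when the cloud is not more expensive per month,
    in which case owned hardware never pays for itself.
    """
    monthly_saving = cloud_monthly_cost - owned_monthly_cost
    if monthly_saving <= 0:
        return None
    return math.ceil(hardware_cost / monthly_saving)


# Hypothetical: $60,000 of hardware costing $1,000/month to run,
# versus $3,500/month for equivalent cloud capacity.
print(break_even_month(60_000.0, 1_000.0, 3_500.0))
```

If the workload is expected to run unchanged for longer than the break-even period, owned hardware may be the cheaper option; if demand is uncertain, the flexibility of consumption pricing may be worth the premium.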

Shadow IT—where departments procure cloud services independently using corporate credit cards—represents a significant challenge. Without centralized visibility, organizations lose negotiating leverage and budget control. Vendor consolidation strategies that merge contracts under unified agreements help reduce administrative overhead while improving cost management.

Understanding cloud software requires grasping these interconnected dimensions: the technical capabilities of scalability and virtualization, the strategic considerations of multi-cloud resilience, the practical challenges of network integration, and the financial implications of consumption-based models. Each dimension influences the others, creating a complex but navigable landscape for organizations committed to mastering modern infrastructure approaches.
