Zero Trust in a legacy environment is not about replacing everything, but about surgically containing inherent risk and building a defensible perimeter around what you cannot change.

- Micro-segmentation acts as an internal firewall, isolating critical legacy assets to contain breaches and prevent lateral movement.

- Zero Trust Network Access (ZTNA) replaces broad network access with granular, application-specific tunnels, drastically reducing the attack surface.

- Mandatory device posture checks ensure that no unpatched or compromised endpoint, whether remote or on-premise, can connect to your internal resources.

Recommendation: Begin not with a complete overhaul, but by identifying a single, high-risk application. Pilot a micro-segmentation and ZTNA strategy around it to demonstrate value and build a repeatable blueprint.

The “castle-and-moat” security model is dead. Every network architect knows this, yet many are still tasked with defending sprawling, aging networks built on that very principle. The industry shouts “Zero Trust!” as the solution, presenting a utopian vision of a secure, modern architecture. We’re told to implement micro-segmentation, transition to Zero Trust Network Access (ZTNA), and base everything on identity. This advice, while correct, often ignores the brutal reality of legacy environments: monolithic applications, flat networks, and hardware that was never designed for dynamic, identity-aware policies.

The core challenge is not understanding the “what” of Zero Trust, but mastering the “how” within an environment that actively resists it. This isn’t a simple technology swap; it’s a fundamental shift in architectural philosophy. The real task for an architect is one of architectural quarantine: treating legacy systems as inherently untrusted and building layers of verification around them. It’s about accepting that you can’t rebuild the city overnight, but you can, and must, build firebreaks to contain the inevitable fires.

This guide moves beyond the “never trust, always verify” mantra to provide a pragmatic roadmap. We will dissect the core pillars of a Zero Trust implementation specifically for legacy networks, focusing on containment strategies, granular access control, and balancing robust security with operational reality. This is not a replacement project; it is a containment and control strategy.

This article provides a structured approach for network architects, breaking down the theory into actionable, strategic components. The following sections detail each critical step in retrofitting a Zero Trust model onto an existing, complex infrastructure.

Summary: A Pragmatic Blueprint for Zero Trust in Legacy Environments

- Why Perimeter Defense Is Dead in the Age of Remote Work?

- Micro-Segmentation: Limiting Lateral Movement After a Breach

- VPN vs ZTNA: Which Provides Granular Access Control?

- The User Experience Friction That Derails Zero Trust Projects

- Device Posture Checks: Denying Access to Unpatched Laptops

- The Firewall Misconfiguration That Exposes Internal Networks

- SMS vs Authenticator Apps: Why SMS 2FA Is No Longer Safe?

- MFA Protocols: How to Balance Security With User Experience?

Why Perimeter Defense Is Dead in the Age of Remote Work?

The concept of a secure corporate perimeter has been rendered obsolete. Its dissolution was accelerated by the massive shift to remote work, which effectively extended the corporate network into thousands of unmanaged home offices. With employees, partners, and contractors accessing critical resources from various locations and devices, the attack surface is no longer a defined boundary but a diffuse, amorphous cloud. Attackers are well aware of this fundamental shift. Once they breach the non-existent perimeter, often through a simple phishing attack, they can remain undetected for extended periods. The global median attacker dwell time was 10 days in 2023, a dangerously long window to explore, escalate privileges, and exfiltrate data.

This new reality means the greatest threat is often no longer the initial intrusion, but what happens *after* an attacker is inside. A flat, legacy network is a paradise for an intruder, allowing for unrestricted lateral movement. A compromised laptop on home Wi-Fi can become a pivot point into the heart of corporate infrastructure. The traditional model, which implicitly trusts any user or device once they are “inside” the network, is a catastrophic failure in this context. It’s a model that assumes a trusted location, but in a world of remote work, there is no such thing.

The principle of “never trust, always verify” must therefore be applied regardless of user location or network origin. Every access request, whether from a coffee shop or the desk next to the server room, must be treated as potentially hostile. This is the foundational mindset required for securing a modern, distributed workforce and marks the definitive end of the perimeter-centric security era. The focus must shift from wall-building to comprehensive and continuous verification.

Micro-Segmentation: Limiting Lateral Movement After a Breach

If we assume a breach is inevitable—and we must—the primary goal shifts from prevention to containment. This is the core purpose of micro-segmentation: the practice of dividing a network into small, isolated security segments down to the individual workload level. In a legacy environment, this is not a feature but a survival mechanism. It’s the architectural equivalent of building fireproof bulkheads in a ship; one compartment may flood, but the entire vessel won’t sink. By implementing strict controls on east-west traffic (communication between servers), you drastically limit an attacker’s ability to move laterally across the network after an initial compromise.

This “architectural quarantine” is especially critical for legacy applications that cannot be easily updated or secured. You can’t fix the application, but you can build a virtual cage around it. This involves defining granular policies that dictate exactly which other workloads the application is allowed to communicate with, on which ports, and using which protocols. Everything else is denied by default. This approach effectively shrinks the “blast radius” of a breach. A compromised web server, for instance, should never be able to initiate a connection to a payroll database server.
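A default-deny policy of this kind can be sketched in a few lines. The following is a minimal illustration, not a vendor implementation: the workload names, ports, and the `Flow` and `is_allowed` names are all hypothetical, and a real segmentation product would enforce the equivalent rules at the host or hypervisor level.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Flow:
    """A single east-west connection attempt between two workloads."""
    source: str
    destination: str
    protocol: str
    port: int


# Hypothetical allowlist: every permitted flow is listed explicitly.
ALLOWED_FLOWS = frozenset({
    Flow("web-frontend", "legacy-erp", "tcp", 8443),
    Flow("legacy-erp", "erp-database", "tcp", 1521),
    Flow("hr-portal", "payroll-db", "tcp", 5432),
})


def is_allowed(flow: Flow, allowlist: frozenset = ALLOWED_FLOWS) -> bool:
    """Default deny: a flow passes only if it matches an explicit rule."""
    return flow in allowlist


# The web server may reach the legacy application it fronts...
print(is_allowed(Flow("web-frontend", "legacy-erp", "tcp", 8443)))
# ...but has no rule that lets it open a connection to the payroll database.
print(is_allowed(Flow("web-frontend", "payroll-db", "tcp", 5432)))
```

Because rules are directional and exact, the reverse of an allowed flow is also denied unless it is listed separately, which is what keeps the blast radius small.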

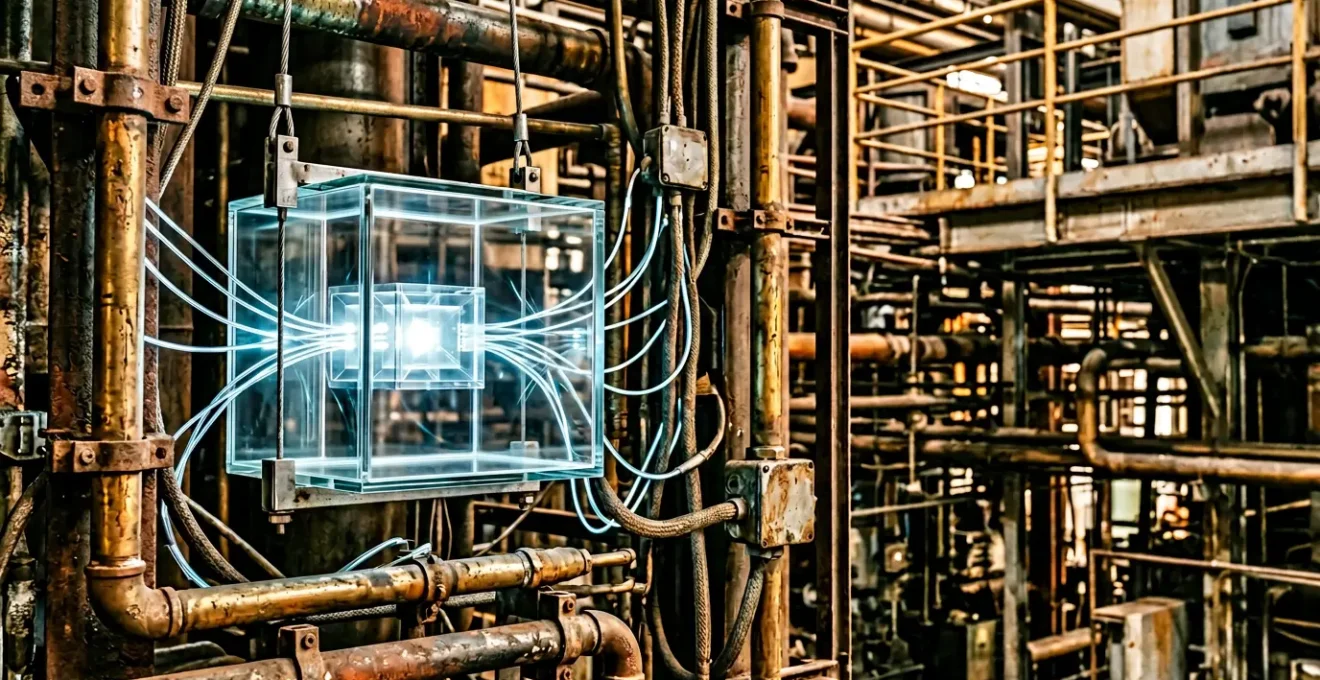

As the visualization suggests, micro-segmentation creates these isolated zones, ensuring that a security event in one area does not cascade into a catastrophic, network-wide failure. For an architect dealing with a flat network, the journey starts with mapping application dependencies to understand legitimate traffic flows before starting to enforce these isolating policies.
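Dependency mapping usually starts from observed traffic. As a rough sketch, assuming flow records have already been exported as `(source, destination, protocol, port)` tuples (the records, the `candidate_rules` name, and the `min_count` threshold below are hypothetical), recurring flows can be proposed as allow rules while rare ones are set aside for human review:

```python
from collections import Counter

# Hypothetical flow records: (source workload, destination workload, protocol, port).
OBSERVED_FLOWS = [
    ("web-frontend", "legacy-erp", "tcp", 8443),
    ("web-frontend", "legacy-erp", "tcp", 8443),
    ("web-frontend", "legacy-erp", "tcp", 8443),
    ("legacy-erp", "erp-database", "tcp", 1521),
    ("legacy-erp", "erp-database", "tcp", 1521),
    ("backup-agent", "legacy-erp", "tcp", 22),
]


def candidate_rules(flows, min_count=2):
    """Split observed flows into recurring ones (candidate allow rules)
    and rare ones that someone should review before enforcement."""
    counts = Counter(flows)
    recurring = sorted(f for f, n in counts.items() if n >= min_count)
    review = sorted(f for f, n in counts.items() if n < min_count)
    return recurring, review


recurring, review = candidate_rules(OBSERVED_FLOWS)
print("candidate allow rules:", recurring)
print("needs review:", review)
```

A frequency threshold is only a starting heuristic: a monthly batch job is rare but legitimate, so the review list should be checked with application owners rather than denied automatically.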

Case Study: Manufacturer Establishes Micro-Segmentation for 2,500 Applications

A large manufacturer grappling with over 2,500 applications on a flat network partnered with WWT to implement a robust micro-segmentation strategy. Instead of a disruptive “big bang” approach, the team began with a comprehensive risk assessment of all applications and developed a scoring system. They then piloted a solution with Illumio, focusing on a single customer-service application that had access to 60% of the organization’s infrastructure. This phased and targeted approach allowed the manufacturer to protect its most critical assets first, proving the value of containment without halting business operations and creating a blueprint for the rest of the network.

VPN vs ZTNA: Which Provides Granular Access Control?

For decades, the Virtual Private Network (VPN) has been the workhorse of remote access, providing an encrypted tunnel into the corporate network. However, the VPN model is a classic example of perimeter-based thinking. Once authenticated, a user is effectively “on the network,” often granting them broad access to entire network segments. This creates a significant security risk, as a compromised user account or device becomes an open door for an attacker to explore the internal landscape. Zero Trust Network Access (ZTNA) fundamentally inverts this model.

Unlike a VPN’s broad network-level access, ZTNA provides zero-trust, application-level access. A user is never placed “on the network.” Instead, the ZTNA solution brokers a secure, encrypted, one-to-one connection between the authenticated user and a specific application they are authorized to use. Access is granted on a per-session basis, and only after verifying both the user’s identity and the security posture of their device. This approach adheres strictly to the principle of least privilege, ensuring users can only access the resources explicitly required for their role, and nothing more. The difference in security posture is stark.
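The brokering decision described above can be illustrated with a small sketch. Everything here is an assumption made for illustration: the application names, the role names, and the `AccessRequest` and `authorize_session` names do not come from any particular ZTNA product.

```python
from dataclasses import dataclass

# Hypothetical entitlements: which roles may reach which applications.
APP_ENTITLEMENTS = {
    "legacy-erp": {"finance", "erp-admin"},
    "hr-portal": {"hr"},
}


@dataclass(frozen=True)
class AccessRequest:
    user: str
    roles: frozenset
    identity_verified: bool  # e.g. the identity provider confirmed SSO plus MFA
    device_healthy: bool     # outcome of a device posture check
    application: str


def authorize_session(request: AccessRequest) -> bool:
    """Decide access to exactly one application for one session."""
    # Both the user and the device must be verified before roles matter.
    if not (request.identity_verified and request.device_healthy):
        return False
    # Unknown applications have no entitled roles, so they are denied by default.
    allowed_roles = APP_ENTITLEMENTS.get(request.application, set())
    return bool(request.roles & allowed_roles)


alice = AccessRequest("alice", frozenset({"finance"}), True, True, "legacy-erp")
print(authorize_session(alice))
```

The decision is scoped to a single application, so a user entitled to `legacy-erp` gains nothing toward `hr-portal`, which is the contrast with a VPN placing the user on a whole segment.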

The following table breaks down the key distinctions between these two remote access technologies, highlighting why ZTNA is the architectural successor to VPN in a Zero Trust world.

| Feature | VPN | ZTNA |
|---|---|---|
| Access Model | Network-level access | Application-level access |
| Trust Model | Trust after authentication | Never trust, always verify |
| Access Scope | Entire network segments | Specific applications only |
| Lateral Movement Risk | High – attackers can explore network | Low – isolated per-app access |
| User Experience | Slower due to backhauling | Faster direct connections |
| Scalability | Limited by hardware capacity | Cloud-native, elastic scaling |
| Visibility | Limited post-authentication | Continuous monitoring per session |

As confirmed by security experts at Fortinet in their analysis, the shift to ZTNA is a move from a model of implicit trust to one of explicit, continuously verified access, dramatically reducing the remote access attack surface.

The User Experience Friction That Derails Zero Trust Projects

A Zero Trust architecture is technically sound, but its implementation can fail for a very human reason: friction. If security controls are perceived as overly burdensome, users will find workarounds, developers will resist adoption, and the entire project can stall. This is particularly true in legacy environments where employees are accustomed to seamless, albeit insecure, access. Introducing multi-factor authentication, device checks, and more granular permissions can feel like a sudden barrage of obstacles to productivity.

Legacy technologies in general tend to be very static in nature and not designed to handle the dynamic rule sets necessary to enforce policy decisions.

– Imran Umar, Senior Cyber Solution Architect, Booz Allen Hamilton, in an interview with CSO Online

This inherent inflexibility of legacy systems is a major source of friction. As Imran Umar points out, these systems weren’t built for the dynamic policy enforcement that Zero Trust demands. Attempting to bolt on modern security can lead to slow performance, broken workflows, and frustrated users. An architect’s role is not just to design the security, but to design the *experience*. This means carefully planning a phased rollout, communicating changes clearly, and choosing solutions that automate verification in the background as much as possible.

The goal is to make the secure path the easiest path. This might involve implementing single sign-on (SSO) alongside stricter controls to reduce login fatigue, or using risk-based authentication that only prompts for extra verification when an access request is anomalous. Measuring and managing this user experience friction is not a soft skill; it is a critical metric for the success of any Zero Trust implementation. Ignoring it guarantees resistance and ultimately, a less secure state as users actively circumvent the controls you’ve worked so hard to build.

Device Posture Checks: Denying Access to Unpatched Laptops

In a Zero Trust model, identity verification extends beyond the user to the device itself. A valid user on a compromised device is an unacceptable risk. Device posture checking, also known as device health validation, is the mechanism that enforces this principle. Before any device—be it a corporate laptop, a personal mobile phone, or a contractor’s tablet—is granted access to a resource, it must first prove that it meets a minimum security baseline. This acts as a digital gatekeeper, ensuring that the endpoints connecting to your network are not Trojan horses.

A comprehensive device posture check assesses several key attributes in real-time. These checks typically include:

- Operating System Version: Is the OS up-to-date and patched against known vulnerabilities?

- Firewall Status: Is the device’s local firewall enabled and properly configured?

- Antivirus/EDR: Is an endpoint detection and response agent running, and are its definitions current?

- Disk Encryption: Is the device’s storage encrypted to protect data at rest?

- Presence of Unapproved Software: Is the device running unauthorized or blacklisted applications?

Only when a device passes all these checks is it deemed “healthy” and permitted to proceed with the access request. If any check fails, access is blocked, and the user is typically redirected to a remediation portal with instructions on how to bring their device into compliance. This is a non-negotiable component of Zero Trust.
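The checks listed above can be expressed as a simple evaluation function. This is a minimal sketch under stated assumptions: the baseline values, the `DevicePosture` fields, and the software name are hypothetical, and in practice the posture data would be reported by an endpoint agent rather than built by hand.

```python
from dataclasses import dataclass, field

# Hypothetical baseline; a real policy would be set by the organization.
MIN_OS_VERSION = (10, 0, 19045)
MAX_DEFINITION_AGE_DAYS = 7
BLOCKED_SOFTWARE = {"unapproved-remote-access-tool"}


@dataclass
class DevicePosture:
    os_version: tuple
    firewall_enabled: bool
    edr_running: bool
    definition_age_days: int
    disk_encrypted: bool
    installed_software: set = field(default_factory=set)


def failed_checks(posture: DevicePosture) -> list:
    """Return the names of every baseline check the device fails."""
    failures = []
    if posture.os_version < MIN_OS_VERSION:
        failures.append("os_version")
    if not posture.firewall_enabled:
        failures.append("firewall")
    if not posture.edr_running or posture.definition_age_days > MAX_DEFINITION_AGE_DAYS:
        failures.append("edr")
    if not posture.disk_encrypted:
        failures.append("disk_encryption")
    if posture.installed_software & BLOCKED_SOFTWARE:
        failures.append("unapproved_software")
    return failures


def is_healthy(posture: DevicePosture) -> bool:
    """A device is healthy only when it passes all checks."""
    return not failed_checks(posture)


laptop = DevicePosture((10, 0, 19041), True, True, 2, False)
print(failed_checks(laptop))
```

Returning the list of failed checks, rather than a bare yes or no, gives a remediation portal something concrete to show the user.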

This verification happens before access is granted, alongside user authentication, and confirms the integrity of the endpoint itself. Because many attacks originate from compromised endpoints, treating every device as untrusted until it proves otherwise is a foundational step in stopping breaches before they begin. It is the practical application of “never trust, always verify” at the device level.

The Firewall Misconfiguration That Exposes Internal Networks

The traditional network firewall, long the cornerstone of enterprise security, often becomes a critical point of failure in a legacy environment, not due to its technology but its configuration. Firewalls were designed to inspect “north-south” traffic—data moving in and out of the network. They are notoriously poor at monitoring “east-west” traffic, the communication that occurs between servers *inside* the network. This is where the most dangerous misconfiguration lies: the “any-any-allow” rule. Often implemented as a temporary fix or due to operational pressure, this rule permits unrestricted communication between internal network segments, effectively rendering the firewall useless for internal threat containment.

This oversight creates a superhighway for attackers. An initial compromise on a low-value, internet-facing server can quickly escalate as the attacker moves laterally, unhindered and unobserved, to more critical systems. Unit 42’s 2024 Incident Response Report found that 38.6% of initial access incidents stemmed from vulnerabilities in internet-facing systems, highlighting just how common this entry vector is. Once inside, the lack of internal segmentation and the permissive firewall rules are what turn a minor incident into a major breach.

Legacy firewalls are inadequate for east-west traffic. Unfortunately, these devices were built to monitor traffic that moves from north to south, from client to server.

– Akamai, Network Segmentation and Microsegmentation White Paper

As Akamai notes, the very architecture of legacy firewalls makes them unfit for the Zero Trust era. A Zero Trust strategy requires dismantling these implicit trust relationships. This means auditing firewall rulesets to eliminate overly permissive rules, implementing micro-segmentation to act as distributed internal firewalls, and logging and inspecting east-west traffic with the same rigor as perimeter traffic. The goal is to transform the internal network from a trusted zone into a series of highly controlled, untrusted segments.
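A ruleset audit for overly permissive entries can begin with something as simple as the sketch below. It assumes the rules have already been exported into a neutral structure; the `Rule` fields, the rule names, and the two finding labels are hypothetical and do not follow any vendor's export format.

```python
from dataclasses import dataclass

ANY = "any"


@dataclass(frozen=True)
class Rule:
    name: str
    source: str
    destination: str
    service: str
    action: str  # "allow" or "deny"


def audit(rules):
    """Flag allow rules that use wildcards for most or all of their match fields."""
    findings = []
    for rule in rules:
        if rule.action != "allow":
            continue
        wildcards = [rule.source, rule.destination, rule.service].count(ANY)
        if wildcards == 3:
            findings.append((rule.name, "any-any-allow"))
        elif wildcards == 2:
            findings.append((rule.name, "broad"))
    return findings


RULES = [
    Rule("temp-migration-fix", ANY, ANY, ANY, "allow"),
    Rule("app-to-db", "10.1.20.0/24", "10.1.30.10", "tcp/1521", "allow"),
    Rule("internal-to-anywhere", "10.0.0.0/8", ANY, ANY, "allow"),
    Rule("default-deny", ANY, ANY, ANY, "deny"),
]
print(audit(RULES))
```

The wildcard count is a coarse heuristic. A wide subnet such as `10.0.0.0/8` is nearly as permissive as `any`, so a fuller audit would also weigh how large each source and destination range is.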

SMS vs Authenticator Apps: Why SMS 2FA Is No Longer Safe?

Multi-factor authentication (MFA) is a non-negotiable pillar of any security strategy. However, not all factors are created equal. For years, SMS-based two-factor authentication (2FA) was considered a significant step up from password-only protection. Today, it is widely regarded by security professionals as a weak method that should be phased out. One weakness lies in SS7, the telephony signaling protocol that carries SMS, which was never designed with security in mind and allows messages to be intercepted in transit. Beyond interception, SMS-based codes are exposed to two common attacks that require no access to the signaling network: phishing and SIM swapping.

In a sophisticated phishing attack, an attacker can create a convincing fake login page that not only captures the user’s password but also prompts them for the SMS code. The user, believing they are logging into a legitimate service, unwittingly hands over their one-time code to the attacker, who can immediately use it to gain access. SIM swapping is even more insidious. Here, an attacker uses social engineering or bribes a mobile carrier employee to transfer the victim’s phone number to a SIM card in their possession. The attacker then receives all the victim’s calls and texts, including 2FA codes, allowing them to bypass security on multiple accounts.

In contrast, modern authenticator apps (like Google Authenticator or Microsoft Authenticator) and hardware security keys (like YubiKey) are far more secure. Authenticator apps generate time-based one-time passwords (TOTP) directly on the device, so no code ever travels over the SMS network and a SIM swap yields nothing. A TOTP code can still be typed into a convincing fake login page, however, so apps alone do not stop real-time phishing. Hardware keys go a step further, requiring physical presence and resisting phishing through origin-binding protocols like FIDO2/WebAuthn. As an architect, mandating the move away from SMS 2FA to more robust MFA methods is a critical step in hardening your identity and access management.
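To make clear why a TOTP code never needs to cross the phone network, here is a minimal sketch of the algorithm from RFC 6238 using only the Python standard library. It uses HMAC-SHA1 and a 30-second step, the defaults in the RFC; the secret shown is the RFC's published test value, not something to use in production, and the function name `totp` is our own.

```python
import hashlib
import hmac
import struct


def totp(secret: bytes, unix_time: int, step: int = 30, digits: int = 6) -> str:
    """Compute a time-based one-time password (RFC 6238, HMAC-SHA1)."""
    # The moving factor is the number of whole time steps since the Unix epoch.
    counter = unix_time // step
    digest = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    # Dynamic truncation (RFC 4226): the low nibble of the last byte picks an offset.
    offset = digest[-1] & 0x0F
    code = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


RFC_TEST_SECRET = b"12345678901234567890"  # test secret published in RFC 6238
print(totp(RFC_TEST_SECRET, 59, digits=8))  # 94287082, per the RFC test vectors
```

Both the app and the server derive the code from a shared secret and the current time, so nothing is transmitted when the code is generated. The user still types the code into a web page, which is why the origin binding in FIDO2/WebAuthn is the stronger defense against phishing.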

Actionable Checklist: MFA Implementation Best Practices

- Assess device security posture: Verify device compliance, patch levels, and EDR agent health before granting access.

- Implement identity-based authentication: Use centralized identity providers integrated with MFA for all access attempts.

- Apply contextual risk assessment: Evaluate user location, device type, and access time to determine authentication requirements.

- Enforce least privilege access: Grant users only the minimum permissions necessary for their specific role and session.

- Deploy continuous verification: Re-authenticate users throughout their session, not just at initial login.

Key Takeaways

- Zero Trust in legacy networks is a containment strategy, not a replacement project. The goal is to build secure zones around insecure systems.

- True security comes from assuming a breach has already occurred and focusing on limiting lateral movement through aggressive micro-segmentation.

- Transitioning from broad VPN access to granular ZTNA and moving from SMS 2FA to app-based MFA are critical, non-negotiable technical shifts.

MFA Protocols: How to Balance Security With User Experience?

Implementing multi-factor authentication is the first step; optimizing it is the art. While the primary goal is to enhance security, a poorly designed MFA strategy can lead to significant user friction and resistance. The key to a successful deployment lies in balancing the strength of authentication with a seamless user experience. This is where adaptive, or risk-based, authentication becomes a critical component of a mature Zero Trust architecture. Rather than treating every login attempt identically, an adaptive MFA system assesses the risk of each session in real-time.

This risk assessment considers a wide range of contextual signals: Is the user logging in from a known device and a familiar location during normal work hours? Or is the request coming from an unrecognized device in a different country at 3 AM? For low-risk scenarios, the system can grant access seamlessly, perhaps even without an MFA prompt, relying on a passwordless mechanism like biometrics. For high-risk scenarios, it can step up the challenge, requiring a more robust factor like a hardware security key or even blocking the access attempt outright. This intelligent approach minimizes friction for legitimate users while raising the bar for potential attackers.
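The step-up logic can be sketched as a small scoring function. The signals mirror the ones named above, but the weights, thresholds, and the `LoginContext`, `risk_score`, and `required_action` names are hypothetical choices for illustration. A production system would draw on far richer signals and tuned values.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LoginContext:
    known_device: bool
    familiar_location: bool
    within_work_hours: bool


def risk_score(ctx: LoginContext) -> int:
    """Sum hypothetical weights for each anomalous signal (0 means no anomalies)."""
    score = 0
    if not ctx.known_device:
        score += 50
    if not ctx.familiar_location:
        score += 30
    if not ctx.within_work_hours:
        score += 20
    return score


def required_action(ctx: LoginContext) -> str:
    """Map the risk score to an authentication decision."""
    score = risk_score(ctx)
    if score >= 80:
        return "block"
    if score >= 30:
        return "step_up"  # e.g. require a hardware security key
    return "allow"


print(required_action(LoginContext(True, True, True)))     # allow
print(required_action(LoginContext(True, False, True)))    # step_up
print(required_action(LoginContext(False, False, False)))  # block
```

With these example weights, a known device used slightly outside work hours is still allowed through, which is the kind of tolerance that keeps friction low for legitimate users.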

Implementing such a system can have a profound impact on security posture. According to Okta’s State of Zero Trust Security 2023 report, the adoption of adaptive authentication can reduce the risk of an identity-related breach by as much as 85%. This demonstrates that better security and a better user experience are not mutually exclusive. As an architect, the goal should be to design an MFA ecosystem that is both formidable to an adversary and almost invisible to a trusted user during their day-to-day work. This balance is the hallmark of a well-executed Zero Trust strategy.

The path to a Zero Trust architecture within a legacy environment is a marathon, not a sprint. It requires a pragmatic, strategic, and often incremental approach. Start by assessing risk, begin with a pilot project, and build momentum. The next logical step is to formalize this strategy and secure the necessary buy-in to expand your initial success across the organization.