The real performance bottlenecks in your IT infrastructure are rarely where you’re looking; they’re hidden by misleading metrics and flawed conventional wisdom.

- Monolithic architectures aren’t inherently slow, and microservices aren’t a magic bullet; the bottleneck is often the communication overhead between them.

- High IOPS are a vanity metric if network or database latency is killing your application load times.

Recommendation: Focus on diagnostic precision: identify the true source of latency by analyzing traffic patterns and query behavior before applying a solution.

The alert screams. The application slows to a crawl. As an IT Operations Manager, your day is instantly derailed by another fire. The immediate pressure is to find a quick fix, and the usual suspects are rounded up: “We need more bandwidth,” “The database is slow,” “Let’s just throw more hardware at it.” This is the cycle of fire-fighting—a reactive loop fueled by treating symptoms instead of curing the disease.

Conventional wisdom points us toward obvious solutions. We upgrade our switches, scale our servers, and refactor our code. But the performance gains are often marginal, and the underlying fragility remains. The core issue is that infrastructure bottlenecks are masters of disguise. They don’t live where the monitoring dashboards point; they hide in the architectural assumptions and operational habits we’ve taken for granted.

What if the problem isn’t a lack of resources, but a fundamental misdiagnosis? This guide is written from the perspective of an infrastructure optimization consultant. My job isn’t to recommend a bigger server; it’s to find the precise, often counter-intuitive, weak link that’s causing a cascade of failures. We will move beyond the platitudes and equip you with a diagnostic mindset to uncover the true source of latency in your network, database, architecture, and memory management.

This article provides a structured approach to identifying and eliminating the hidden choke points that are truly holding your systems back. By examining each layer of the stack through a new lens, you’ll learn to spot the subtle signs of trouble and make targeted, high-impact improvements.

Summary: A Diagnostic Guide to Uncovering Hidden IT Infrastructure Bottlenecks

- Why Your 10GbE Switch Is the Choke Point of Your Network?

- How to Rewrite N+1 Queries That Freeze Your App?

- Monolith vs Microservices: Does Breaking It Down Always Improve Speed?

- The Swap Usage Mistake That Grinds Servers to a Halt

- Round Robin vs Least Connections: Which Balancer Algorithm Wins?

- Why Slow Deployment Pipelines Kill Your Market Responsiveness?

- Why High IOPS Don’t Always Guarantee Fast Application Load Times?

- Identifying Bugs: How to Catch Critical Errors Before Production Deployment?

Why Your 10GbE Switch Is the Choke Point of Your Network?

You’ve upgraded your data center to 10GbE. On paper, your network should be flying. The market for these powerful switches is growing rapidly, with a projected 34.5% CAGR between 2023 and 2030, so you’re in good company. Yet, applications still feel sluggish, and latency spikes persist. The problem isn’t the number on the box; it’s the nature of the traffic running through it. The “10GbE” figure is a misleading metric if your switch architecture can’t handle modern workloads.

The real culprit is often the explosion of “east-west” traffic—the communication between servers *within* your data center. This is a direct consequence of virtualization, hyper-converged infrastructure (HCI), and private cloud environments. Unlike traditional “north-south” traffic that goes in and out of the data center, this internal chatter places immense strain on the switch’s internal capacity, or backplane.

Case Study: The Impact of East-West Traffic

As virtualization and microservices become standard, server-to-server communication within a data center has grown sharply. Virtual firewalls, load balancers, and distributed application components constantly relay data to each other. This creates a large volume of east-west traffic that can overwhelm a 10GbE switch with limited backplane capacity. Even if no single port is saturated, the aggregate traffic creates internal congestion and latency, effectively turning your high-speed switch into a major bottleneck that traditional per-port monitoring might miss.

Therefore, looking only at port utilization gives you a false sense of security. The critical diagnostic question is whether your switch’s backplane and architecture were designed to handle the high-volume, low-latency demands of internal traffic patterns. If not, it’s the true choke point, no matter what the port speed says.
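One way to make that diagnostic question concrete is to compare worst-case port demand against the switching capacity on the datasheet. The sketch below is only an illustration: the switch names and capacity figures are hypothetical, and it assumes the vendor quotes switching capacity as a full-duplex aggregate, which is common but worth confirming for your hardware.

```python
def oversubscription_ratio(ports: int, port_gbps: float, fabric_gbps: float) -> float:
    """Ratio of worst-case full-duplex port demand to switching-fabric capacity."""
    # Each port can send and receive at line rate at the same time (full duplex),
    # so worst-case demand is twice the sum of the port speeds.
    demand_gbps = ports * port_gbps * 2
    return demand_gbps / fabric_gbps


# Hypothetical 48-port 10GbE switches with different fabric capacities.
for name, fabric in [("switch-a", 960.0), ("switch-b", 480.0)]:
    ratio = oversubscription_ratio(ports=48, port_gbps=10.0, fabric_gbps=fabric)
    verdict = "non-blocking" if ratio <= 1.0 else "oversubscribed"
    print(f"{name}: {ratio:.1f}:1 ({verdict})")
```

A ratio above 1:1 does not prove the switch is your bottleneck, but it tells you that heavy east-west traffic can congest the fabric even when every individual port looks healthy.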

How to Rewrite N+1 Queries That Freeze Your App?

One of the most common and devastating application bottlenecks isn’t in the network or hardware, but deep within the code: the N+1 query problem. This occurs when your code retrieves a list of ‘N’ items and then executes a separate database query for each of those items to fetch related data. The result is a flood of small, inefficient queries that can bring a database to its knees, especially as ‘N’ grows. It’s a silent killer of performance.

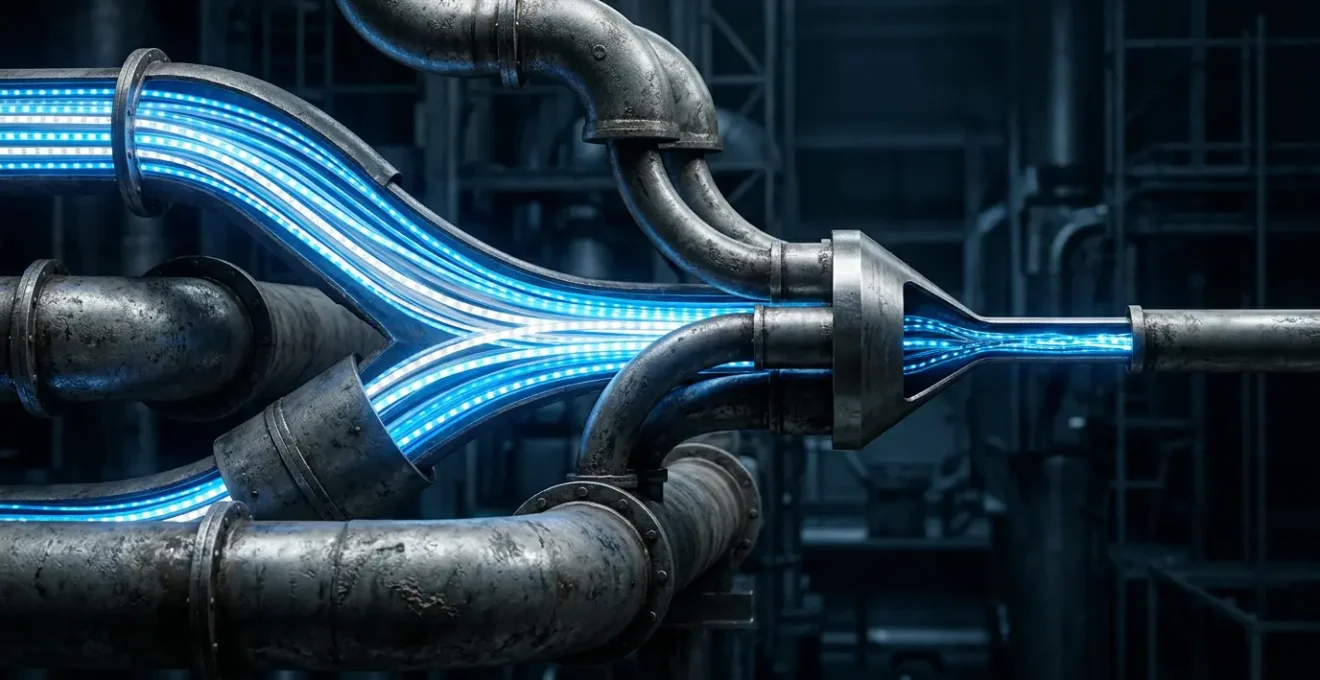

Fixing this issue isn’t just a minor tweak; it can lead to dramatic improvements. Collapsing an N+1 pattern into a single, efficient call can be transformative, and performance testing shows that speedups on the order of 10x are achievable. The key is to shift from many small, “chatty” requests to one or two larger, “chunky” requests. The image above visualizes the difference between the inefficient sequential approach and an optimized, bundled operation.

As the visualization suggests, bundling requests is far more efficient. Instead of a death by a thousand cuts, the database handles a single, predictable operation. This reduces network round-trips, lowers database CPU load, and makes your application significantly more responsive. Identifying and eliminating these patterns should be a top priority for any operations or development team.

Action Plan: Eliminating N+1 Query Patterns

- Identify the Pattern: Look for loops in which each iteration issues its own database query, often with only the ID in the `WHERE` clause changing.

- Implement Eager Loading: Use your ORM’s built-in eager loading features (like `includes` in Rails, `joinedload` in SQLAlchemy, or `.Include()` in Entity Framework) to fetch all necessary related data in an initial query.

- Utilize SQL JOINs: Manually rewrite N+1 queries into a single, comprehensive query using SQL `JOIN` statements to retrieve all parent and child data in one database round-trip.

- Batch Queries with IN Clauses: If a `JOIN` is not practical, gather the IDs from the initial query and use a second query with a `WHERE … IN (…)` clause to fetch all related records in just two total queries instead of N+1.

- Integrate Automated Detection: Add automated N+1 detection (like Bullet for Ruby on Rails, or custom scripts that count queries per request) to your test suite and CI/CD pipeline so that builds fail when new N+1 query patterns are introduced.
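The rewrites in the action plan can be sketched with nothing more than Python’s built-in `sqlite3` module. The `authors` and `books` tables and the function names below are hypothetical and exist only for illustration; the same three shapes (loop of queries, single `JOIN`, batched `IN`) apply to any relational database or ORM.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE authors (id INTEGER PRIMARY KEY, name TEXT);
    CREATE TABLE books (id INTEGER PRIMARY KEY, author_id INTEGER, title TEXT);
    INSERT INTO authors VALUES (1, 'Ada'), (2, 'Grace'), (3, 'Linus');
    INSERT INTO books VALUES (1, 1, 'Notes'), (2, 1, 'Engines'),
                             (3, 2, 'Compilers'), (4, 3, 'Kernels');
""")


def books_n_plus_one(conn):
    """Anti-pattern: one query for the authors, then one more per author."""
    authors = conn.execute("SELECT id, name FROM authors ORDER BY id").fetchall()
    queries = 1
    result = {}
    for author_id, name in authors:
        rows = conn.execute(
            "SELECT title FROM books WHERE author_id = ? ORDER BY id", (author_id,)
        ).fetchall()
        queries += 1
        result[name] = [title for (title,) in rows]
    return result, queries


def books_single_join(conn):
    """One round-trip: a LEFT JOIN returns parents and children together."""
    rows = conn.execute("""
        SELECT a.name, b.title
        FROM authors AS a
        LEFT JOIN books AS b ON b.author_id = a.id
        ORDER BY a.id, b.id
    """).fetchall()
    result = {}
    for name, title in rows:
        result.setdefault(name, [])
        if title is not None:  # authors without books yield a NULL title
            result[name].append(title)
    return result, 1


def books_batched_in(conn):
    """Two round-trips: fetch parents, then all children with WHERE ... IN."""
    authors = conn.execute("SELECT id, name FROM authors ORDER BY id").fetchall()
    names = {author_id: name for author_id, name in authors}
    result = {name: [] for name in names.values()}
    placeholders = ",".join("?" * len(authors))
    rows = conn.execute(
        f"SELECT author_id, title FROM books "
        f"WHERE author_id IN ({placeholders}) ORDER BY id",
        list(names),
    ).fetchall()
    for author_id, title in rows:
        result[names[author_id]].append(title)
    return result, 2


for fn in (books_n_plus_one, books_single_join, books_batched_in):
    data, queries = fn(conn)
    print(f"{fn.__name__}: {queries} queries")
```

All three functions return the same data; only the number of round-trips changes. With three authors the difference is 4 queries versus 1 or 2, but the N+1 version grows with every additional author while the other two stay constant.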

Monolith vs Microservices: Does Breaking It Down Always Improve Speed?

When a large, monolithic application becomes slow and difficult to manage, the modern playbook has a clear answer: break it down into microservices. The promise is alluring—smaller, independent services that are easier to develop, deploy, and scale. However, this migration is often treated as a universal cure for performance woes, which is a dangerous assumption. The reality is that a poorly planned shift to microservices can create more bottlenecks than it solves.

The primary hidden cost is network and communication overhead. In a monolith, function calls are fast, in-memory operations. In a microservices architecture, those same calls become network requests, complete with latency, serialization, and potential points of failure. Furthermore, the distributed nature of microservices dramatically increases complexity. This isn’t just a feeling; a 2024 DZone study found that teams using microservices spent 35% more time on debugging, chasing issues across multiple service boundaries.
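A rough latency budget shows how this overhead accumulates. The model below is a deliberate simplification: it assumes the remote calls happen one after another, and every figure in it is hypothetical rather than measured.

```python
def request_latency_ms(compute_ms: float, remote_calls: int,
                       rtt_ms: float, serialization_ms: float) -> float:
    """Latency of one request whose remote calls run one after another."""
    per_call_overhead = rtt_ms + serialization_ms
    return compute_ms + remote_calls * per_call_overhead


# Hypothetical figures, chosen only to illustrate the shape of the cost.
monolith = request_latency_ms(
    compute_ms=20.0, remote_calls=0, rtt_ms=1.0, serialization_ms=0.5)
microservices = request_latency_ms(
    compute_ms=20.0, remote_calls=8, rtt_ms=1.0, serialization_ms=0.5)

print(f"monolith: {monolith} ms, microservices: {microservices} ms")
```

The business logic costs the same 20 ms in both cases; the extra 12 ms comes entirely from eight sequential hops. Issuing calls in parallel or batching them reduces that overhead, which is why the call graph matters more than the architectural label.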

Performance Reality Check: Monoliths vs. Microservices Under Load

Academic research has put the performance claims to the test. A study published in MDPI’s *Applied Sciences* journal compared monolithic and microservices architectures with identical hardware resources. The findings were revealing: under average load conditions, both architectures can exhibit similar performance. More surprisingly, under lower loads with fewer than 100 users, the monolithic application actually performed slightly better due to the absence of network overhead. This demonstrates that the performance bottleneck is often dictated by the specific use case and load profile, not by the architectural pattern itself.

The decision to break down a monolith should not be a knee-jerk reaction to slowness. It must be a strategic choice based on organizational needs for team autonomy and independent deployment cadences. For pure performance, a well-structured monolith can often outperform a chatty, poorly designed microservices ecosystem. The bottleneck may not be the monolith itself, but rather an inefficient module within it that could be optimized in place.

The Swap Usage Mistake That Grinds Servers to a Halt

Swap space on a server often feels like a free insurance policy. When physical RAM (Random Access Memory) runs low, the operating system can move less-used pages of memory to a designated space on the hard disk (swap), freeing up RAM for active processes. In theory, this prevents out-of-memory errors. In practice, relying on swap is one of the most insidious performance bottlenecks you can have. The moment your server starts actively swapping, you’ve already lost the performance battle.

The fundamental issue is speed. Disk I/O, even on fast SSDs, is incredibly slow compared to RAM. The Kubernetes documentation warns that swapping data back to memory is “many orders of magnitude slower” than reading it from RAM directly. This performance penalty isn’t linear; it’s a cliff. A server that is heavily swapping will experience a dramatic increase in I/O wait times, causing CPU cores to sit idle while they wait for data. The entire system becomes sluggish and unresponsive.

This problem is magnified in virtualized or containerized environments. One misbehaving application that consumes too much memory can force the host to swap out memory pages belonging to other, well-behaved applications. This creates a “noisy neighbor” problem, where the performance of your critical services is degraded by a completely unrelated process.

Enabling swap increases the risk of noisy neighbors, where Pods that frequently use their RAM may cause other Pods to swap.

– Kubernetes Documentation Team, Kubernetes Swap Memory Management Documentation

The consultant’s view is clear: swap is a diagnostic tool, not a resource. A spike in swap usage is an alarm bell indicating that a server is undersized for its workload or that there’s a memory leak in an application. The solution is not to tolerate the swapping, but to identify the root cause of the memory pressure and fix it by adding more RAM or optimizing the application.
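To treat swap as a diagnostic signal, watch the rate of swapping rather than the amount of swap in use. On Linux, `/proc/vmstat` exposes cumulative `pswpin` and `pswpout` page counters; the sketch below compares two samples of that file. The function names and the zero-pages-per-second threshold are assumptions for illustration, not a recommended alerting policy.

```python
def parse_swap_counters(vmstat_text: str) -> dict:
    """Extract the cumulative pswpin/pswpout page counters from /proc/vmstat text."""
    counters = {}
    for line in vmstat_text.splitlines():
        parts = line.split()
        if len(parts) == 2 and parts[0] in ("pswpin", "pswpout"):
            counters[parts[0]] = int(parts[1])
    return counters


def swap_rate(before: dict, after: dict, interval_s: float) -> dict:
    """Pages swapped in and out per second between two samples."""
    return {key: (after[key] - before[key]) / interval_s
            for key in ("pswpin", "pswpout")}


def is_actively_swapping(rate: dict, threshold_pages_per_s: float = 0.0) -> bool:
    """True if either direction exceeds the (hypothetical) threshold."""
    return (rate["pswpin"] > threshold_pages_per_s
            or rate["pswpout"] > threshold_pages_per_s)


# Usage on a Linux host (left as comments so the sketch runs anywhere):
#   import time
#   first = parse_swap_counters(open("/proc/vmstat").read())
#   time.sleep(5)
#   second = parse_swap_counters(open("/proc/vmstat").read())
#   print(is_actively_swapping(swap_rate(first, second, 5.0)))
```

A server can hold stale pages in swap for days without harm. Sustained non-zero rates in both directions are the pattern that usually indicates real memory pressure.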

Round Robin vs Least Connections: Which Balancer Algorithm Wins?

Load balancers are a cornerstone of high-availability infrastructure, but simply having one isn’t enough. The algorithm it uses to distribute traffic is a critical decision that can either smooth out performance or inadvertently create bottlenecks. Two of the most common algorithms are Round Robin and Least Connections, and choosing the right one requires diagnostic precision.

Round Robin is the simplest method. It works like dealing a deck of cards, sending each new request to the next server in the list, in sequential order. This approach is fair, predictable, and works perfectly well if all incoming requests are uniform and take roughly the same amount of time to process. The problem arises when this isn’t the case. If one request is a quick API call and the next is a heavy report generation, Round Robin doesn’t care. It will blindly send traffic to servers that may already be bogged down with a long-running task.

This is where Least Connections proves its superiority for most modern applications. Instead of just following a sequence, this algorithm actively checks which server currently has the fewest active connections and sends the new request there. It’s like a smart bank manager directing you to the teller with the shortest line. This dynamic approach is far more efficient at balancing the actual workload across your server farm, especially when processing times for requests are variable. It prevents a single “heavy” request from causing a pile-up on one server while others sit idle.

So, which one wins? For simple, homogeneous traffic (like serving static images), Round Robin is sufficient. But for nearly any dynamic application with variable request complexities, Least Connections is the clear winner. Choosing it isn’t just an optimization; it’s a fundamental requirement for building a resilient and truly balanced system. Using Round Robin in a complex environment is often a hidden bottleneck waiting to be exposed under load.
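The difference is easy to reproduce in a toy simulation. The sketch below is not how any particular load balancer is implemented: it assumes one request arrives per tick, two identical servers, and a hypothetical workload that alternates between a heavy request (10 ticks) and a light one (1 tick).

```python
def simulate(durations, n_servers, policy):
    """Assign one request per tick; return total work (ticks) sent to each server."""
    active = [[] for _ in range(n_servers)]  # finish times of open connections
    work = [0] * n_servers
    for t, duration in enumerate(durations):
        # Drop connections that have completed by time t.
        active = [[finish for finish in conns if finish > t] for conns in active]
        if policy == "round_robin":
            target = t % n_servers
        elif policy == "least_connections":
            # Ties go to the lowest-numbered server.
            target = min(range(n_servers), key=lambda i: len(active[i]))
        else:
            raise ValueError(f"unknown policy: {policy}")
        active[target].append(t + duration)
        work[target] += duration
    return work


# Hypothetical workload: heavy report, quick API call, heavy report, ...
workload = [10, 1, 10, 1, 10, 1, 10, 1]

print("round robin:      ", simulate(workload, 2, "round_robin"))        # [40, 4]
print("least connections:", simulate(workload, 2, "least_connections"))  # [22, 22]
```

With this workload, Round Robin happens to send every heavy request to the same server, while Least Connections splits the work evenly. Real traffic is rarely this adversarial, but the simulation shows why a policy that ignores current load can concentrate slow requests on one node.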

Why Slow Deployment Pipelines Kill Your Market Responsiveness?

A bottleneck isn’t always a server or a switch; sometimes, it’s a process. In today’s competitive landscape, the most dangerous bottleneck an organization can have is a slow CI/CD (Continuous Integration/Continuous Deployment) pipeline. This isn’t just a technical inconvenience for developers; it’s a direct throttle on your company’s ability to respond to the market. Every hour of delay in the pipeline is an hour your new feature, bug fix, or security patch is not in the hands of your customers.

Think of your deployment pipeline as the central artery of your business value stream. An idea is conceived, code is written, and then it enters the pipeline. If that pipeline is clogged with slow, flaky tests, manual approval gates, and inefficient build processes, the flow of value stops. A build that takes two hours to complete means you can, at best, react to a production issue or a competitor’s move in two-hour increments. A pipeline that requires manual intervention for every deployment creates a dependency on a single person’s availability.

This “latency to market” is a form of architectural debt that accrues interest with every passing minute. It creates a culture of fear around deployments, where releases are large, risky, and infrequent. This is the opposite of the agile, responsive posture needed to thrive. A fast, reliable, and automated pipeline enables small, frequent, and low-risk releases. It transforms deployment from a dreaded event into a routine, non-disruptive activity.

As a consultant, when I see a slow deployment pipeline, I don’t just see a technical problem. I see lost revenue, missed opportunities, and a significant competitive disadvantage. Optimizing your CI/CD process—by parallelizing tests, caching dependencies, and automating every step—is one of the highest-leverage investments you can make. It’s the bottleneck that, once fixed, unlocks the speed of your entire organization.
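Parallelizing tests is often the quickest of these wins to estimate. The sketch below splits a suite across workers with a greedy longest-first heuristic; the test names and durations are hypothetical, and a real CI system may offer its own sharding mechanism that you should prefer.

```python
import heapq


def shard_tests(durations_s: dict, workers: int):
    """Greedy longest-first assignment of tests to the least-loaded worker."""
    heap = [(0.0, i) for i in range(workers)]  # (total seconds, worker index)
    shards = [[] for _ in range(workers)]
    # Longest tests first; names break ties so the result is deterministic.
    for name, secs in sorted(durations_s.items(), key=lambda kv: (-kv[1], kv[0])):
        load, idx = heapq.heappop(heap)
        shards[idx].append(name)
        heapq.heappush(heap, (load + secs, idx))
    wall_clock = max(load for load, _ in heap)
    return shards, wall_clock


# Hypothetical suite timings in seconds.
suite = {"test_api": 300, "test_db": 240, "test_ui": 180,
         "test_auth": 120, "test_utils": 60}

shards, wall_clock = shard_tests(suite, workers=3)
print(f"sequential: {sum(suite.values())} s, sharded: {wall_clock:.0f} s")
print(shards)
```

In this example the 15-minute sequential run drops to 5 minutes on three workers. The heuristic is not guaranteed to be optimal, but it needs only recorded test durations, which most CI systems already collect.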

Why High IOPS Don’t Always Guarantee Fast Application Load Times?

In the world of storage, IOPS (Input/Output Operations Per Second) has long been the headline metric. We’re conditioned to believe that more IOPS equals better performance. Storage vendors boast about millions of IOPS, and IT managers often make purchasing decisions based on this single number. This is a classic case of a misleading metric. A high IOPS number is a vanity metric if it’s not paired with low latency, and it guarantees nothing about real-world application performance.

To understand why, let’s use an analogy. Imagine a highway with a toll plaza that can process 10,000 cars per hour (high IOPS). However, there’s a massive traffic jam 10 miles down the road (high latency). The ability to get cars *onto* the highway quickly is completely irrelevant if they immediately get stuck. The time it takes for a car to complete its journey (the latency) is what the driver actually cares about.

In storage, it’s the same principle. IOPS measures the number of read/write commands your storage system can handle per second. Latency measures the time it takes to complete a single one of those commands, typically in milliseconds. An application waiting for data from the storage system is blocked until that I/O operation completes. If latency is high, the application will feel slow, regardless of how many IOPS the underlying storage can theoretically handle. A system with 100,000 IOPS and 10ms of latency can be significantly slower for a user than a system with 50,000 IOPS and 1ms of latency.
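The arithmetic behind that comparison can be checked directly. The sketch below assumes a page load that issues 200 dependent reads, each waiting for the previous one to finish (a hypothetical but common pattern), and uses Little’s Law to show how much concurrency each system needs before its headline IOPS figure is reached.

```python
def serial_io_time_ms(dependent_reads: int, latency_ms: float) -> float:
    """Time for a chain of reads where each one waits for the previous result."""
    return dependent_reads * latency_ms


def outstanding_ios_needed(iops: float, latency_ms: float) -> float:
    """Little's Law: concurrency = throughput x latency."""
    return iops * latency_ms / 1000.0


systems = {
    "A (100,000 IOPS, 10 ms)": (100_000, 10.0),
    "B (50,000 IOPS, 1 ms)": (50_000, 1.0),
}

for name, (iops, latency_ms) in systems.items():
    load_ms = serial_io_time_ms(200, latency_ms)
    depth = outstanding_ios_needed(iops, latency_ms)
    print(f"{name}: page load {load_ms:.0f} ms, "
          f"needs {depth:.0f} I/Os in flight to reach rated IOPS")
```

System A takes 2,000 ms for the same page that System B serves in 200 ms. System A only delivers its 100,000 IOPS when about 1,000 requests are in flight at once, which a single user session waiting on sequential reads never generates.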

The true bottleneck is almost always latency. This can be caused by network congestion in a SAN, inefficient data layouts, or contention on the storage controller. Focusing solely on IOPS is looking in the wrong place. The critical metric to monitor is latency. If your application is slow and storage latency is high, you’ve found your bottleneck. If latency is low but the application is still slow, the bottleneck is elsewhere—likely in the database queries or application code.

Key Takeaways

- Challenge your metrics: High-level numbers like 10GbE or IOPS can be misleading. Always investigate the underlying factors like backplane capacity and latency.

- Analyze traffic patterns: The nature of your workload, whether it’s east-west traffic overwhelming a switch or an N+1 query pattern flooding a database, is often the true bottleneck.

- Architecture is context-dependent: A monolith isn’t inherently bad, and microservices aren’t a panacea. The right choice depends on your specific performance profile and organizational needs.

Identifying Bugs: How to Catch Critical Errors Before Production Deployment?

We’ve dissected bottlenecks in hardware, software, and process, but the most disruptive and costly bottleneck of all is a critical bug that makes it into production. It halts customer activity, erodes trust, and triggers an all-hands-on-deck fire-fight that derails all other productive work. The ultimate form of infrastructure optimization, therefore, is not just about speed, but about stability. The goal is to build a system that actively prevents bugs from being deployed in the first place.

This requires a cultural and procedural shift, known as “shifting left.” Instead of relying on a final QA phase to catch errors, quality assurance becomes an automated, integral part of the entire development lifecycle. The pipeline we discussed earlier becomes the primary defense mechanism. It’s no longer just a conveyor belt for code; it’s a series of automated quality gates. Every code commit should automatically trigger a suite of unit tests, integration tests, and static code analysis tools.

If any test fails, the build is automatically rejected. The bug is caught within minutes of being written, when it is cheapest and easiest to fix. This automated feedback loop is critical. It moves bug detection from a manual, end-of-cycle activity to an immediate, developer-centric one. Furthermore, incorporating security scanning (SAST/DAST) and performance testing into the pipeline ensures that code is not just functional, but also secure and efficient, before it ever gets near a production environment.

Building this robust, automated safety net is the final piece of the puzzle. It’s the proactive strategy that allows you to move away from reactive fire-fighting. By catching critical errors early and automatically, you eliminate the most disruptive bottlenecks and create a stable foundation upon which you can truly optimize for performance.

Stop fire-fighting. Start diagnosing. Apply these principles to identify one true bottleneck in your system this week and begin the shift from reactive operations to proactive, sustainable infrastructure optimization.