Relying on multi-cloud for resilience is a trap; true uptime comes from mastering the failure points between providers, not just using more of them.

- Single-provider outages are increasing in frequency and duration, making dependency a systemic risk.

- The most critical vulnerabilities lie in the interconnects: VPN tunnels, data synchronization, and traffic routing.

- A resilient architecture demands deliberate, paranoid design focused on isolating failure domains and minimizing blast radius.

Recommendation: Shift your focus from evaluating individual cloud provider features to obsessively engineering and testing the interstitial plumbing that binds them together.

The 3 AM phone call. The dashboard bathed in red. A critical application is down, and every second of downtime is costing millions. In today’s landscape, the default answer to this nightmare is “go multi-cloud.” It’s presented as a panacea, a simple checkbox for business continuity. But this comforting notion is dangerously incomplete. Many organizations adopt multiple clouds only to discover they’ve merely duplicated their infrastructure, not their resilience.

The hard truth is that multi-cloud doesn’t eliminate single points of failure; it just moves them. These new vulnerabilities fester in the complex, often unmonitored, spaces between your cloud environments. They are the misconfigured VPN tunnels, the flawed data replication strategies, and the naive routing rules that turn a regional provider outage into a catastrophic, cascading failure across your entire system. The real work of resilience isn’t in choosing AWS and Azure; it’s in preparing for the day they both have problems, or the connection between them breaks.

This is not a guide about the virtues of cloud diversification. This is a manual for the paranoid, for the enterprise architect tasked with guaranteeing uptime when everything is designed to fail. We will dissect the most common and treacherous multi-cloud failure points. We will move beyond the platitudes of redundancy and provide a framework for building an infrastructure that is not just distributed, but truly resilient and capable of surviving the inevitable regional outage.

This article provides a comprehensive blueprint for enterprise architects. It details the risks, compares the strategic choices, and provides actionable frameworks to build a truly resilient multi-cloud system.

Summary: A Blueprint for Multi-Cloud Resilience Against Outages

- Why Relying on a Single Cloud Provider Risks Your Business Continuity?

- How to Establish Secure VPN Tunnels Between AWS and Azure?

- Multi-Cloud vs Hybrid Cloud: Which Offers Better Redundancy?

- The Data Sync Mistake That Corrupts Multi-Cloud Databases

- RTO vs RPO: Which Metric Dictates Your Backup Strategy?

- Arbitraging Cloud Costs: 3 Tools to Spot Cheaper Compute Zones

- Route Optimization: Ensuring Local Traffic Stays Local

- Enterprise Multi-Cloud Architectures: How to Unify Fragmented Systems?

Why Relying on a Single Cloud Provider Risks Your Business Continuity?

The belief that a major cloud provider is “too big to fail” is a relic of a bygone era. The reality is that all providers experience outages, and these events are becoming more impactful. According to recent cloud outage monitoring data, there was an 18% increase in critical cloud service interruptions in the first half of 2024 compared to the previous year, with their duration growing by nearly 19%. This isn’t a theoretical risk; it’s a statistical certainty that your provider will have a bad day. When it does, your entire business is at its mercy.

The core danger is systemic risk propagation. A single misconfigured update or a localized hardware failure can trigger a cascading effect across a provider’s global infrastructure. The blast radius of such an event can be enormous, affecting not just one service but the entire ecosystem of authentication, storage, and compute that your applications depend on.

Case Study: The Azure Global Outage of July 2024

On July 19, 2024, a misconfigured update within Azure’s infrastructure initiated a cascading failure that lasted over six hours. The failure crippled authentication systems, locking users out and severing access to essential services like Teams, Outlook, and SharePoint. The wider disruption that day was devastating: airlines were grounded, financial exchanges halted, and hospital operations were disrupted, although much of that damage is generally attributed to a separate faulty CrowdStrike update to Windows hosts that struck at nearly the same time. Together, these events caused billions in productivity losses and serve as a stark reminder of how a single configuration change can propagate far beyond its origin, demonstrating the immense vulnerability of a single-vendor dependency.

Relying on a single provider creates a single domain of failure. Even with multi-region or multi-zone deployments within that provider, you are still subject to failures in their global control plane, authentication systems, or core networking. True business continuity requires failure domain isolation, where an outage at one provider has a near-zero chance of impacting services running on another. Anything less is not a disaster recovery strategy; it’s a gamble.
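The value of failure domain isolation can be put into rough numbers. The sketch below assumes that deployments fail independently of each other, which is exactly the property the interconnects must be engineered to preserve; the function names and the 99.9% figures are illustrative assumptions, not provider SLAs.

```python
def combined_availability(*availabilities: float) -> float:
    """Probability that at least one deployment is up.

    Assumes failures are statistically independent (an idealization).
    """
    p_all_down = 1.0
    for a in availabilities:
        p_all_down *= (1.0 - a)
    return 1.0 - p_all_down


def downtime_minutes_per_year(availability: float) -> float:
    """Expected downtime per (non-leap) year for a given availability."""
    return (1.0 - availability) * 365 * 24 * 60


single = 0.999                               # hypothetical single-provider figure
dual = combined_availability(single, single)  # two isolated providers
print(f"single: {downtime_minutes_per_year(single):.1f} min/year")
print(f"dual:   {downtime_minutes_per_year(dual):.2f} min/year")
```

Any shared dependency (a common DNS provider, a single identity system, one replication link) breaks the independence assumption, and the real figure moves back toward the single-provider number.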

How to Establish Secure VPN Tunnels Between AWS and Azure?

The secure VPN tunnel is the foundational element of multi-cloud connectivity, but it’s also a primary “interconnect choke point.” For an architect, the goal is not to execute the CLI commands but to understand the inherent complexity and potential failure modes. Setting up a resilient, high-availability connection between AWS and Azure isn’t a single action; it’s a multi-stage project involving numerous components on both sides, each a potential point of failure.

You must orchestrate Virtual Network Gateways, Customer Gateways, Local Network Gateways, and Site-to-Site VPN Connections, ensuring that IP ranges, shared keys, and routing policies are perfectly synchronized. A single mismatch in IKEv2 protocol settings or a typo in a CIDR block can render the entire connection useless. The real challenge is designing for failure by implementing redundant tunnels and ensuring that failover is automatic and tested, not a manual process during an emergency.

The decision to use a standard internet-based VPN versus a dedicated private connection is a critical architectural trade-off between cost, performance, and reliability. This choice directly impacts your RTO and application performance.

A standard Site-to-Site VPN offers a cost-effective solution for non-critical workloads, but its performance is subject to the whims of the public internet. For mission-critical applications requiring guaranteed throughput and low latency, a dedicated connection is non-negotiable, as detailed in this comparative analysis of connectivity options.

| Connectivity Option | Bandwidth Range | Monthly Cost (Entry Level) | Latency | Use Case |
|---|---|---|---|---|
| Site-to-Site VPN (Azure VPN Gateway + AWS VPN) | 1.25 Gbps – 10 Gbps | ~$150-300 | Variable (Internet-dependent) | Cost-effective for moderate traffic, non-mission-critical workloads |
| Azure ExpressRoute + AWS Direct Connect | 50 Mbps – 100 Gbps | $300-$50,000+ | Low, predictable | High throughput, SLA-backed, mission-critical workloads requiring dedicated private connectivity |

Action Plan: Auditing Your AWS-to-Azure VPN Resiliency

- Gateway Provisioning Audit: Confirm Azure’s Virtual Network Gateway is provisioned and its GatewaySubnet is correctly sized (minimum /27). Verify that the ~30 minute provisioning time is factored into recovery plans.

- Endpoint Configuration Check: Inventory all AWS Customer Gateways. Verify that each one accurately points to a public IP of a corresponding Azure VPN Gateway and is attached to the correct VPC.

- High Availability Validation: Confront your current setup. Do you have redundant AWS Site-to-Site VPN Connections? Are they configured with static routing pointing to two distinct Local Network Gateways in Azure for true high availability?

- Key & Protocol Consistency: Audit the connection configurations on both platforms. Is the IKEv2 protocol consistently used? Are the pre-shared keys from the AWS configuration file correctly implemented in the Azure connection settings?

- Tunnel Status Monitoring: Review your monitoring dashboards. Are you actively tracking the tunnel status on both the AWS and Azure sides? Do your alerts validate that the connection shows as ‘UP’ and that traffic is flowing, not just that the configuration exists?
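Several of the audit items above are mechanical consistency checks that can be automated. The following is a minimal sketch, assuming you have already exported each side's tunnel settings into a simple record; the `TunnelSide` structure and its field names are hypothetical and do not correspond to any AWS or Azure API object.

```python
import ipaddress
from dataclasses import dataclass


@dataclass(frozen=True)
class TunnelSide:
    """One side's view of a site-to-site tunnel (hypothetical audit record)."""
    local_cidr: str       # address space this side advertises
    remote_cidr: str      # address space this side expects from the peer
    ike_version: int      # e.g. 2 for IKEv2
    psk_fingerprint: str  # hash of the pre-shared key, never the key itself


def audit_tunnel(aws: TunnelSide, azure: TunnelSide, gateway_subnet: str) -> list:
    """Return human-readable findings; an empty list means no mismatch found."""
    findings = []
    # A larger prefix length means a smaller subnet; /27 is the stated minimum.
    if ipaddress.ip_network(gateway_subnet).prefixlen > 27:
        findings.append("GatewaySubnet is smaller than /27")
    if aws.ike_version != azure.ike_version:
        findings.append("IKE version mismatch")
    if aws.psk_fingerprint != azure.psk_fingerprint:
        findings.append("pre-shared key mismatch")
    # Each side's 'remote' range must equal the other side's 'local' range.
    if ipaddress.ip_network(aws.remote_cidr) != ipaddress.ip_network(azure.local_cidr):
        findings.append("AWS remote CIDR does not match Azure local CIDR")
    if ipaddress.ip_network(azure.remote_cidr) != ipaddress.ip_network(aws.local_cidr):
        findings.append("Azure remote CIDR does not match AWS local CIDR")
    if ipaddress.ip_network(aws.local_cidr).overlaps(ipaddress.ip_network(azure.local_cidr)):
        findings.append("address spaces overlap")
    return findings


aws_side = TunnelSide("10.0.0.0/16", "172.16.0.0/16", 2, "abc")
azure_side = TunnelSide("172.16.0.0/16", "10.0.0.0/24", 2, "abc")  # wrong prefix
print(audit_tunnel(aws_side, azure_side, "172.16.255.0/27"))
```

A check like this only validates that the configuration is internally consistent. It does not replace monitoring that confirms the tunnel is up and passing traffic.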

Multi-Cloud vs Hybrid Cloud: Which Offers Better Redundancy?

The terms “multi-cloud” and “hybrid cloud” are often used interchangeably, but from a disaster recovery perspective, they represent fundamentally different redundancy models. Hybrid cloud typically involves connecting a private, on-premises infrastructure to one or more public clouds. Its primary redundancy benefit is against a public cloud provider failure, as you can potentially fall back to your own data center. However, this model makes your on-premises infrastructure the ultimate single point of failure.

Multi-cloud, on the other hand, involves using services from two or more public cloud providers (e.g., AWS and Azure). This model is inherently designed to provide redundancy against the failure of an entire provider. By architecting applications to run active-active or active-passive across different hyperscalers, you create true failure domain isolation. An outage on AWS should, in theory, have no impact on your Azure deployment.

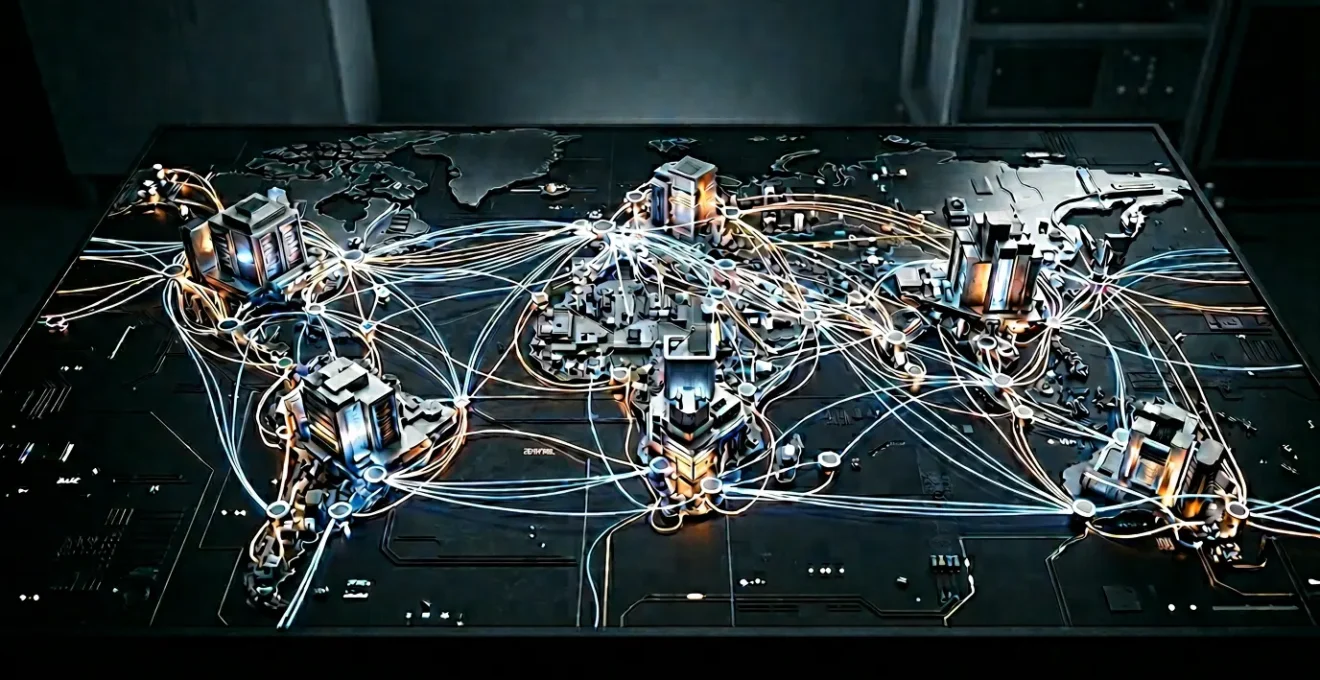


Recent cloud downtime analysis reveals that multi-cloud environments experience 17% fewer total outages than single-vendor deployments. However, this advantage is not automatic. It is earned through meticulous architecture that treats each cloud as an independent failure domain, connected by well-defined and resilient links. Without this discipline, a multi-cloud setup can become a more complex and fragile version of a single cloud.

The Data Sync Mistake That Corrupts Multi-Cloud Databases

The most insidious failure in a multi-cloud environment is not a downed server; it’s silent data corruption. The single biggest mistake is treating database synchronization as a simple background replication task. Traditional async replication between clouds is a recipe for disaster. During a network partition or “split-brain” scenario, where each cloud environment thinks it’s the master, you can end up with two divergent sets of data. Attempting to reconcile these datasets after the fact is often impossible, leading to permanent data loss.

This is not a hypothetical problem. Imagine a financial transaction being committed in your AWS region while your Azure region is disconnected. When the connection is restored, which transaction is correct? If a user updates their profile on Azure while the same profile is being deleted on AWS, what is the source of truth? Relying on timestamps (which can suffer from clock skew) or manual reconciliation is not a viable strategy for critical systems. The very process designed to provide redundancy becomes the agent of data corruption.
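The clock-skew problem is easy to reproduce. The sketch below is a toy model of timestamp-based "last write wins" reconciliation; the `Write` record, the site names, and the ten-second skew are illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Write:
    site: str
    value: str
    true_time: float   # instant the write really happened (unknowable in practice)
    clock_skew: float  # this site's clock error in seconds

    @property
    def timestamp(self) -> float:
        """The timestamp the site actually records, including its clock error."""
        return self.true_time + self.clock_skew


def last_write_wins(a: Write, b: Write) -> Write:
    """Naive reconciliation: keep whichever write carries the larger timestamp."""
    return a if a.timestamp >= b.timestamp else b


# The Azure write happens 5 seconds later, but Azure's clock runs 10 seconds slow.
aws_write = Write("aws", "email=old@example.com", true_time=100.0, clock_skew=0.0)
azure_write = Write("azure", "email=new@example.com", true_time=105.0, clock_skew=-10.0)

winner = last_write_wins(aws_write, azure_write)
print(winner.site, winner.value)  # the older AWS value survives
```

The later update is silently discarded, and nothing in the surviving data indicates that anything went wrong.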

The solution is to move away from simplistic replication and adopt database technologies designed from the ground up for distributed, multi-cloud environments. These systems don’t just copy data; they manage a distributed consensus protocol (like Raft or Paxos) to ensure that a write is only considered “committed” when a quorum of nodes across different regions or clouds agrees. This prevents split-brain scenarios and guarantees data consistency, even in the face of network partitions or regional outages.
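The quorum rule itself can be illustrated in a few lines. This is a deliberately simplified sketch of majority acknowledgement, not an implementation of Raft or Paxos: it omits leader election, terms, and rollback of uncommitted entries, and the `Replica` class is hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Replica:
    name: str
    reachable: bool = True
    log: list = field(default_factory=list)

    def accept(self, entry: str) -> bool:
        """Acknowledge the write only if this replica can be reached."""
        if self.reachable:
            self.log.append(entry)
        return self.reachable


def quorum_write(replicas: list, entry: str) -> bool:
    """Report the write as committed only if a strict majority acknowledged it.

    A real consensus protocol would also discard the entry from replicas
    that accepted a write which failed to reach quorum.
    """
    acks = sum(1 for r in replicas if r.accept(entry))
    return acks > len(replicas) // 2


cluster = [Replica("aws"), Replica("azure"), Replica("witness")]
print(quorum_write(cluster, "tx-1"))   # all three reachable

cluster[1].reachable = False           # Azure is partitioned away
print(quorum_write(cluster, "tx-2"))   # two of three still form a majority

cluster[2].reachable = False           # only one replica left
print(quorum_write(cluster, "tx-3"))   # no majority, so the write is refused
```

Because a side of the partition that holds only a minority can never commit, two divergent "masters" cannot both accept writes, which is what rules out split-brain. An odd number of voting members, often with the third placed in a separate location, is a common way to avoid ties.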

Case Study: Multi-Cloud Database Architecture in FinTech

Financial technology firms have pioneered the use of distributed SQL databases like CockroachDB to achieve resilience. By deploying a single logical database across multiple regions and even multiple cloud providers, they can withstand the failure of an entire region or cloud without data loss or downtime. For developers, the complex multi-cloud setup behaves like a single-instance database, while the system transparently handles data distribution, replication, and consistency. This approach, highlighted in fintech multi-cloud architecture implementations, allows these companies to meet strict data sovereignty regulations while ensuring extreme resilience, effectively eliminating data sync as a single point of failure.

RTO vs RPO: Which Metric Dictates Your Backup Strategy?

In disaster recovery, Recovery Time Objective (RTO) and Recovery Point Objective (RPO) are not just technical acronyms; they are the contractual promises you make to the business about service availability. Misunderstanding them is the first step toward a failed recovery. RTO dictates the ‘how long’: the maximum acceptable time your application can be down after a disaster. RPO dictates the ‘how much’: the maximum amount of data loss the business can tolerate, measured in time (e.g., 15 minutes of transactions).

It’s crucial to understand that RPO dictates your backup strategy, while RTO dictates your recovery infrastructure. An RPO of 5 minutes demands a backup or replication frequency of at least every 5 minutes. An RTO of 30 minutes requires an infrastructure (e.g., hot or warm standby sites) that can be fully operational within that timeframe. The most common mistake is defining an aggressive RTO/RPO without committing to the architectural complexity and cost required to achieve it.
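The relationship between these objectives and the underlying design can be checked with simple arithmetic. The sketch below assumes that worst-case data loss equals the replication interval plus any replication lag, and that recovery steps run one after another; the step names and durations are hypothetical.

```python
def worst_case_data_loss(interval_min: float, lag_min: float = 0.0) -> float:
    """Minutes of data at risk if disaster strikes just before the next copy lands."""
    return interval_min + lag_min


def meets_rpo(rpo_min: float, interval_min: float, lag_min: float = 0.0) -> bool:
    """True when the worst-case data loss fits inside the RPO."""
    return worst_case_data_loss(interval_min, lag_min) <= rpo_min


def meets_rto(rto_min: float, recovery_steps: dict) -> bool:
    """Recovery steps are assumed to run sequentially, so their durations add up."""
    return sum(recovery_steps.values()) <= rto_min


# A 5-minute replication schedule with 1 minute of lag misses a 5-minute RPO.
print(meets_rpo(5, interval_min=5, lag_min=1))

# Hypothetical warm-standby runbook measured against a 30-minute RTO.
runbook = {"detect_outage": 5, "dns_failover": 5, "scale_warm_standby": 15}
print(meets_rto(30, runbook))
```

The RTO check is only as good as the step durations fed into it, which is why those numbers should come from rehearsed failovers rather than estimates.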


Achieving near-zero RTO and RPO requires a fully active-active multi-cloud architecture with a distributed database, which is the most expensive and complex option. A more relaxed RTO/RPO might be serviceable with a simpler pilot light or backup-and-restore strategy. The choice is always a trade-off. As recent cloud availability research demonstrates, the architectural investment pays off: applications architected across multiple availability zones experience 19 minutes less downtime than single-zone deployments. This benefit increases to 36 minutes less downtime when architected across entire regions, directly impacting your ability to meet RTO.

Arbitraging Cloud Costs: 3 Tools to Spot Cheaper Compute Zones

While the title suggests “arbitrage,” a seasoned architect sees this as “systematic cost avoidance.” In a multi-cloud environment, cost management is not a secondary concern; it is an active and continuous part of the architecture. The most dangerous costs are the ones you don’t see coming, and chief among them are data egress fees. Moving data out of a cloud provider’s network is never free, and in a multi-cloud architecture where data is constantly flowing between providers for replication, backup, and processing, these costs can explode.

Industry analysis shows that egress fees can make up 10% to 15% of total cloud costs, and often much more. Your architecture must be designed to minimize this traffic. This means processing data as close to its source as possible and only moving aggregated results, or leveraging direct connections like AWS Direct Connect or Azure ExpressRoute, which offer lower and more predictable data transfer rates.

Beyond egress, compute and storage costs can vary significantly between providers and even between different regions of the same provider. True cost arbitrage involves using tools that provide real-time cost visibility across your entire multi-cloud estate. Three key categories of tools are essential:

- Cloud Cost Management Platforms (e.g., Cloudability, Flexera): These tools provide a unified dashboard to track spending across AWS, Azure, GCP, and others. They can identify unused resources, recommend rightsizing for VMs, and alert on cost anomalies, but their real power is in showing where your money is going at a granular level.

- Spot Instance Brokers (e.g., Spot by NetApp): For non-critical, fault-tolerant workloads, spot instances offer up to 90% savings over on-demand prices. These tools automate the process of bidding for and managing spot capacity across multiple clouds, dynamically shifting workloads to wherever the cheapest compute is available at that moment.

- FinOps (Financial Operations) Frameworks: This is less a tool and more a cultural practice, supported by tools like Terraform and cost policy checkers. It involves embedding cost awareness directly into the development and operations lifecycle, allowing teams to see the cost implications of their architectural decisions before they are deployed.

The cost of moving data between clouds for replication or processing is a major factor that must be accounted for in any multi-cloud budget. As the following table illustrates, these “silent” costs can quickly accumulate.

| Data Transfer Scenario | Cost per GB | Example: 10TB Monthly Transfer | Impact |
|---|---|---|---|
| Cross-Region Replication (AWS S3) | $0.02 – $0.09 | $200 – $900/month | Silent cost accumulation from automated replication |
| Internet Egress (General) | Up to $0.09 | Up to $900/month | Serving content to end users, API responses |
| Multi-Cloud Transfer (AWS to Azure) | $0.02 – $0.09 + destination ingress | $200+ per direction | Significant expense for multi-cloud architectures |
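The monthly figures in the table follow directly from volume multiplied by the per-GB rate. The small sketch below reproduces them, assuming 1 TB is counted as 1,000 GB (the convention that yields the table's numbers) and using the table's own rate range rather than any current price list.

```python
def monthly_transfer_cost(terabytes: float, rate_per_gb: float, gb_per_tb: int = 1000) -> float:
    """Monthly cost in dollars for moving a given volume at a flat per-GB rate."""
    return terabytes * gb_per_tb * rate_per_gb


low = monthly_transfer_cost(10, 0.02)
high = monthly_transfer_cost(10, 0.09)
print(f"10 TB/month: ${low:,.0f} to ${high:,.0f}")
```

Real pricing is usually tiered and varies by region pair, so treat a flat-rate estimate like this as a first-order budget check only.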

Route Optimization: Ensuring Local Traffic Stays Local

In a globally distributed multi-cloud architecture, inefficient traffic routing is a silent killer of performance and a needless inflation of cost. The goal of route optimization is simple: ensure user requests are served by the closest possible endpoint, and that backend traffic between services does not take an unnecessary and costly trip across the globe. This is often referred to as avoiding “traffic tromboning” or “hairpinning.”

For user-facing traffic, this is achieved through Global Server Load Balancing (GSLB) or DNS-based routing services like AWS Route 53 or Azure Traffic Manager. These services can route users based on latency, geography, or endpoint health. For example, a user in Europe should be transparently directed to your European deployment on Azure, while a user in Asia is sent to your Asian deployment on AWS. If the Azure deployment fails, the GSLB should automatically redirect all European traffic to the next-best location, containing the blast radius of the outage.
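The routing decision described here reduces to "pick the lowest-latency endpoint that is currently healthy." The following is a minimal sketch of that logic, not the behavior of Route 53 or Traffic Manager specifically; the `Endpoint` record, endpoint names, and latency figures are made up for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Endpoint:
    name: str
    healthy: bool
    latency_ms: dict  # client region -> measured latency in milliseconds


def route(client_region: str, endpoints: list) -> Optional[Endpoint]:
    """Return the healthy endpoint with the lowest latency for this client region."""
    candidates = [e for e in endpoints if e.healthy and client_region in e.latency_ms]
    return min(candidates, key=lambda e: e.latency_ms[client_region], default=None)


endpoints = [
    Endpoint("azure-europe", True, {"europe": 20, "asia": 180}),
    Endpoint("aws-asia", True, {"europe": 170, "asia": 25}),
]

print(route("europe", endpoints).name)  # nearest deployment serves the request

endpoints[0].healthy = False            # the European deployment fails its health check
print(route("europe", endpoints).name)  # traffic falls back to the next-best location
```

In a DNS-based setup, the time to fail over also depends on health-check intervals and record TTLs, so the fallback is not instantaneous.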

For backend, service-to-service traffic, the challenge is ensuring that communication between microservices within the same region or cloud stays within that provider’s network. This is where proper VPC/VNet peering and private endpoints are critical. Routing traffic out to the public internet and back in just to talk to a neighboring service is not only slow and insecure, it also racks up unnecessary data egress charges. Effective routing significantly limits the scope of an outage. As cloud downtime statistics reveal, regional redundancy reduced the impact area by 60% during major hyperscaler disruptions in 2024. This is a direct result of intelligent routing that isolates failures.

Key Takeaways

- Single-provider dependency is no longer a calculated risk; it is a systemic vulnerability with a statistically increasing probability of failure.

- True resilience is not achieved by duplicating infrastructure, but by obsessively engineering the interstitial connections—VPNs, data sync, and routing—which are the new primary failure points.

- Architectural choices must be driven by business-defined metrics (RTO/RPO) and an awareness of hidden costs like data egress, treating resilience as a feature to be designed, not an assumed benefit.

Enterprise Multi-Cloud Architectures: How to Unify Fragmented Systems?

A mature multi-cloud strategy is not about randomly scattering workloads across providers. It is about a deliberate, unified approach that treats multiple clouds as a single, federated platform. This requires moving beyond a simple “lift and shift” mentality to a model of decision-based placement, where each workload is deployed to the cloud that best suits its specific needs—for performance, capability, or cost. This is the path from a fragmented collection of systems to a cohesive enterprise architecture.

Achieving this unification hinges on one core technology: abstraction. You must create a layer of abstraction above the disparate cloud APIs and infrastructure models. For applications, this is most commonly achieved through containerization with Docker and orchestration with Kubernetes. Kubernetes provides a consistent runtime environment, allowing you to move containerized applications between AWS (EKS), Azure (AKS), and GCP (GKE) with minimal changes. For infrastructure, the abstraction layer is Infrastructure as Code (IaC) using tools like Terraform.

Case Study: Goldman Sachs’ Performance-Based Workload Allocation

Goldman Sachs exemplifies a sophisticated multi-cloud strategy by implementing a primary and secondary cloud model. Their trading systems run on AWS to leverage its mature financial ecosystem, while intensive AI/ML workloads are placed on Google Cloud to capitalize on its strengths in model training. As detailed in this analysis of multi-cloud examples, this architecture is not random; it follows a decision-based placement for each workload’s specific requirements. Containerization with Kubernetes ensures portability and rapid recovery, leading to a 40% improvement in analytics speeds while maintaining strict, unified data controls across both environments.

By defining your infrastructure in code, you create a single source of truth that can be used to provision resources across any provider, enforcing security, governance, and tagging standards automatically. This approach is fundamental to managing complexity at scale.
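As one illustration of automatic enforcement, a pipeline step can reject resource definitions that lack mandatory tags before anything is provisioned. The sketch below assumes resources have already been parsed into plain dictionaries; the required tag set and the resource shape are hypothetical and not a Terraform data format.

```python
REQUIRED_TAGS = {"owner", "cost-center", "environment"}  # hypothetical standard


def missing_tags(resource: dict) -> set:
    """Tags required by policy that the resource does not define."""
    return REQUIRED_TAGS - set(resource.get("tags", {}))


def policy_violations(resources: list) -> dict:
    """Map each non-compliant resource name to its sorted list of missing tags."""
    return {r["name"]: sorted(m) for r in resources if (m := missing_tags(r))}


planned = [
    {"name": "aws-vm-1", "tags": {"owner": "payments", "cost-center": "42", "environment": "prod"}},
    {"name": "azure-vm-1", "tags": {"owner": "payments"}},
]
print(policy_violations(planned))
```

Running the same check against every provider's planned resources is what makes the standard uniform across clouds rather than per-provider.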

Deutsche Bank solved multi-cloud integration by empowering its 4,000 developers to use a single HashiCorp Terraform workflow, abstracting disparate cloud APIs for rapid, self-service provisioning while enforcing security and governance.

– HashiCorp case study, Multi-Cloud Architecture: Proven Strategies for Resilience – Fluence

This is the end goal: to empower development teams with the speed and agility of the cloud, while the central architecture team maintains control and ensures resilience through a unified, abstracted control plane.

The time to find the holes in your multi-cloud strategy is now, through deliberate chaos engineering and rigorous testing—not during a 3 AM global outage. Your journey to 99.999% uptime begins with the assumption of failure. Start architecting for it today.